Early attempts at integrating AI in Search

Neeva, You and other search engines have embedded AI in their search results and give us an idea of what the future of search could look like.

Sources say Microsoft plans to integrate ChatGPT with Bing and potentially Office applications like Word, PowerPoint and Excel before the end of March [source].

The rumors come on top of loud voices on social media saying Google is doomed because AI will answer questions in the future. Many call ChatGPT the “Google killer”.

I’m highly skeptical that generative AI like ChatGPT is ready to integrate with search, and I wonder if bringing generative AI to MS Office might mean document summaries, as Google has planned for Google Docs. However, last week the Google challengers Neeva and You added ChatGPT-like beta features. Despite being early MVPs (minimum viable products), they could give us a good idea of what AI in Search might look like moving forward.

From many answers to one

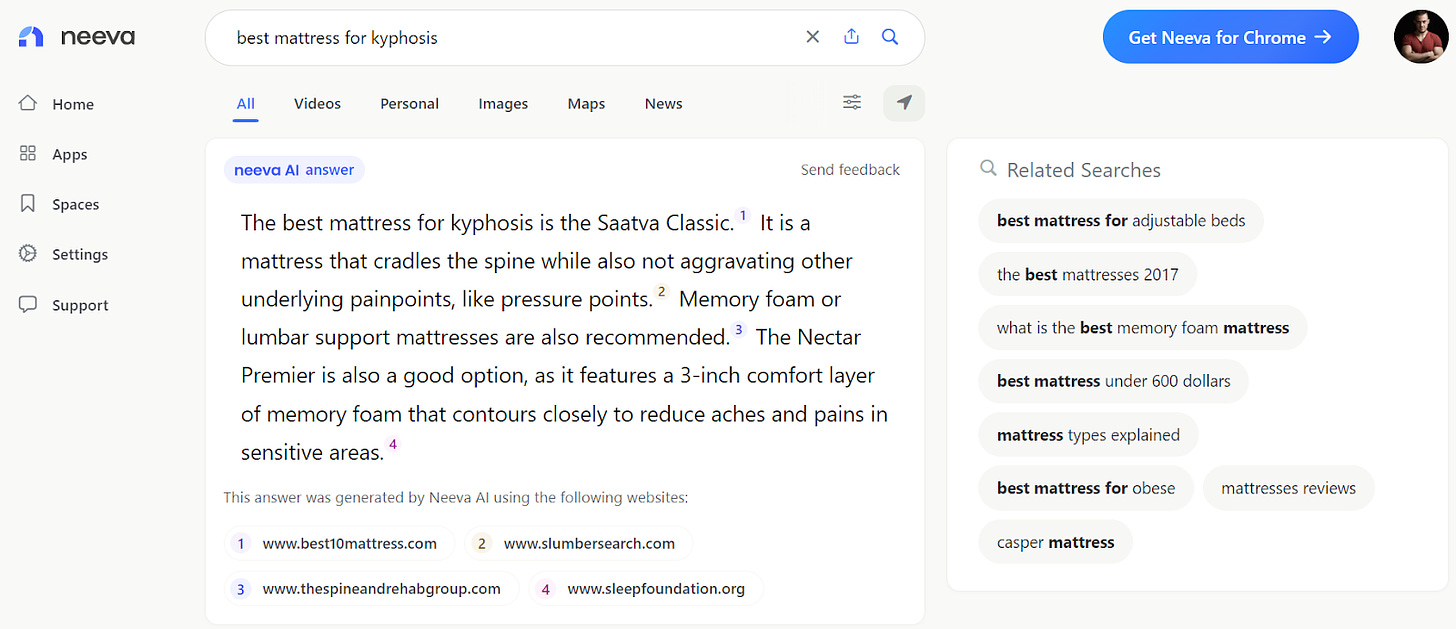

Searching for a question on Neeva now brings up a new AI answer feature: a single result that cites several sources. It’s like a “stitched-together featured snippet”. Organic results still appear below the AI-generated answer in the normal fashion we’re used to.

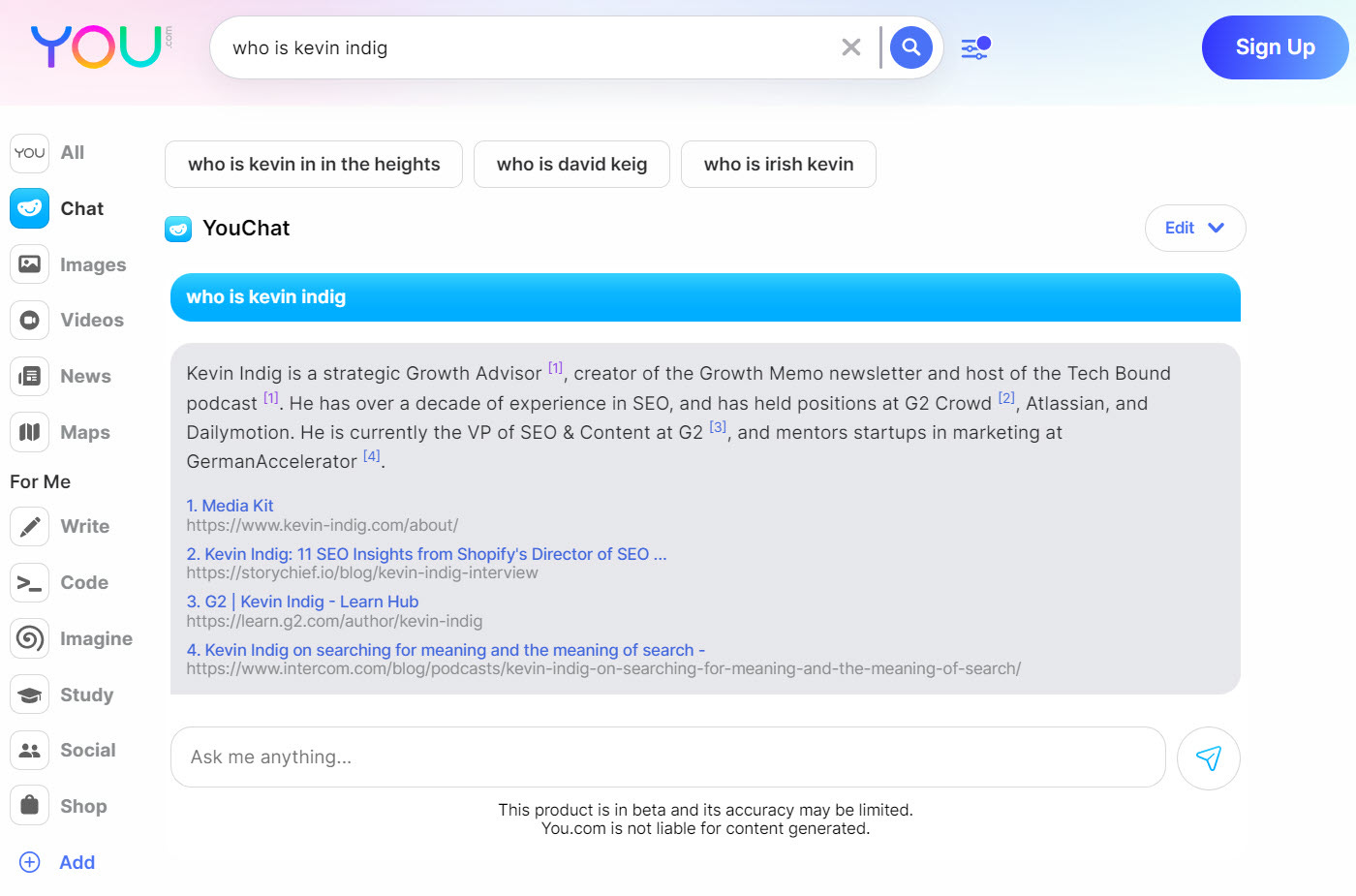

On YouChat, I found the same implementation (not sure who came up with it first), though not all results had citations.

One big fear of marketers and companies is that a search engine that looks like ChatGPT would not send traffic to sites anymore, but that’s not the case in early MVPs. Searchers do get a single answer but can dig deeper with citations. Besides the potential legal implications of not citing sources, a good search experience has to allow users to do their own research. Searchers want to compare results and educate themselves when evaluating big decisions or expensive purchases. So, a single answer without further results might actually be a bad experience when it’s not about quick facts.

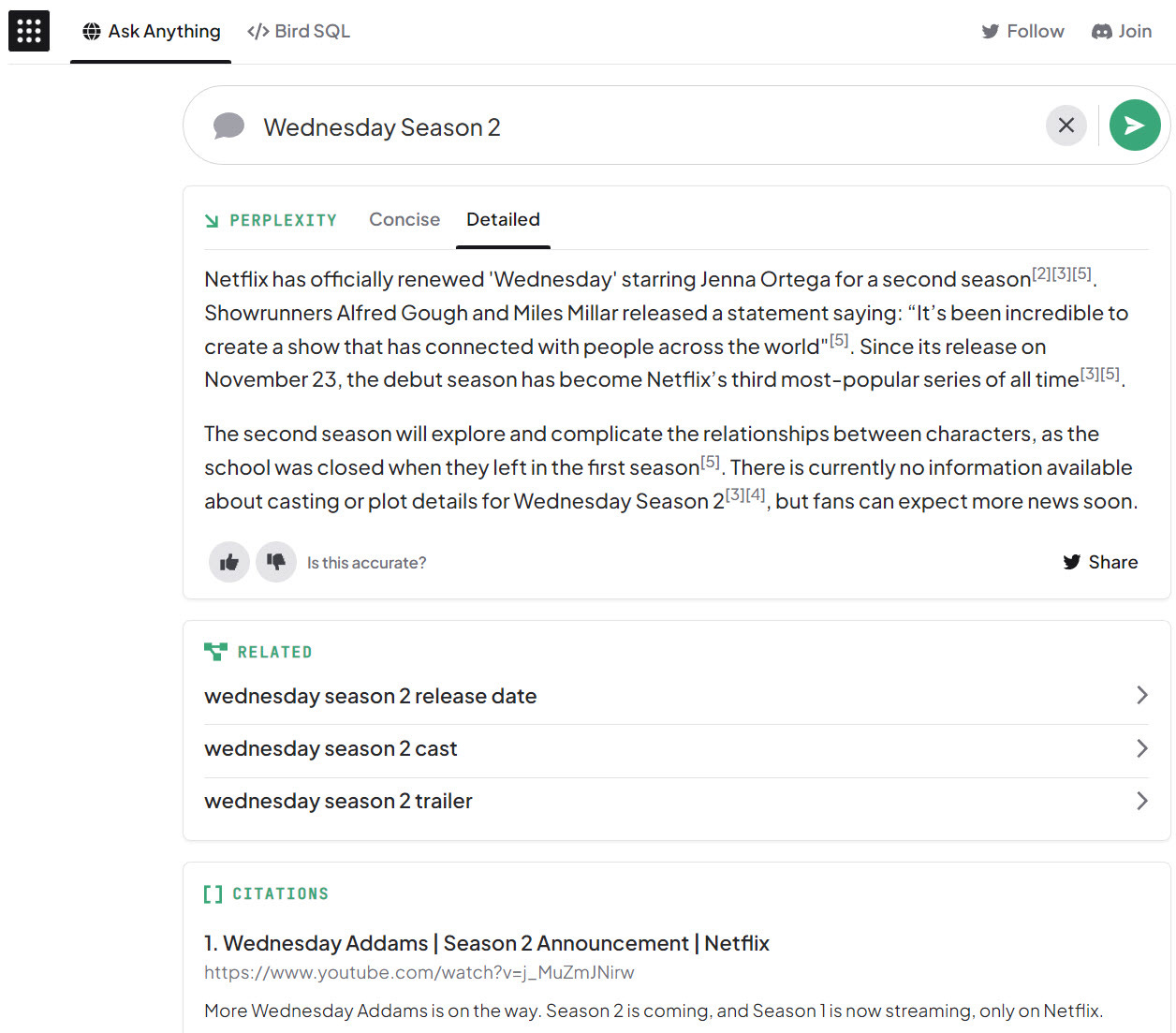

Another mix of chatbot and search engine is Perplexity AI, which pairs an LLM (Large Language Model) from OpenAI with Bing search results. Unlike Neeva or YouChat, Perplexity AI allows users to pick between concise and detailed answers.

If the single-answer-plus-citations style sticks, it would transform Search from many results by many sites to one result by many sites. We need a lot more data to make an informed decision, but cited search results could squeeze traffic from search engines even more than the implementation of SERP Features already has. It would also bring Google closer to its roots, since PageRank was built on the idea of citations.

AI answers with citations are a concept that can be transferred to News and Video results as well. Google News, for example, could summarize the news of the day in a few paragraphs and cite sources. Generative AI could summarize the best moments within videos, like a news ticker or reel for any question. For example, when you search for “US Congress”, you could get a quick 1-minute video that cuts together key moments from several other videos. Google already surfaces key moments in search, and YouTube shows you peak engagement across videos.

When asked whether the AI was connected to the web, Sridhar Ramaswamy (Founder and CEO of Neeva) said, “[...] yep, this is a deep integration of LLMs at every stage of search: query rewrite, retrieval, ranking and result generation!”
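To make the four stages in that quote more concrete, here is a toy sketch in Python. It is not how Neeva or any other engine actually works: the page list, the stopword set, the keyword-overlap scoring and all function names are made up for illustration, and the "generation" step simply stitches together first sentences instead of calling an LLM.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    text: str


# Hypothetical mini "index"; a real engine would query a web-scale index.
PAGES = [
    Page("https://example.com/boots", "Winter boots keep feet warm. Look for insulation."),
    Page("https://example.org/care", "Leather boots need regular care. Use wax in winter."),
    Page("https://example.net/weather", "Tomorrow will be rainy."),
]

# Assumed stopword list, just enough for the example query.
STOPWORDS = {"what", "are", "the", "best", "for", "a", "is", "how", "to"}


def rewrite(query):
    """Stage 1, query rewrite: lowercase the query and drop stopwords."""
    words = query.lower().replace("?", "").split()
    return [w for w in words if w not in STOPWORDS]


def retrieve(terms, pages):
    """Stage 2, retrieval: keep pages that share at least one term with the query."""
    scored = []
    for page in pages:
        words = set(page.text.lower().replace(".", "").split())
        score = len(words & set(terms))
        if score > 0:
            scored.append((score, page))
    return scored


def rank(scored, k=2):
    """Stage 3, ranking: highest term overlap first, keep the top k pages."""
    ordered = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [page for _, page in ordered[:k]]


def generate(pages):
    """Stage 4, result generation: one stitched answer with numbered citations.

    A real system would prompt an LLM here; this stand-in quotes the first
    sentence of each page and appends a citation marker.
    """
    parts = []
    for i, page in enumerate(pages, start=1):
        first_sentence = page.text.split(".")[0].strip()
        parts.append(f"{first_sentence} [{i}].")
    return " ".join(parts), [page.url for page in pages]


def answer(query):
    return generate(rank(retrieve(rewrite(query), PAGES)))


text, citations = answer("What are the best winter boots?")
print(text)
for i, url in enumerate(citations, start=1):
    print(f"[{i}] {url}")
```

Even in this toy version, the output has the shape described above: one answer, with each claim pointing back to a source the searcher can click through to.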

Serving monetizable queries with AI

Another exciting element of early generative AI integrations is the fact that they can serve monetizable queries. ChatGPT didn’t return results about products or services because it isn’t connected to the live web. Neeva AI, YouChat, and Perplexity AI, however, draw on web results and can surface information about products and services.

Queries have different degrees of monetizability. Not every search can be monetized with ads because not every search indicates that users are in the market. For example, when searching for “weather tomorrow”, people want to know the weather. They’re likely not in the market to buy an umbrella, even if the result is “rainy”.

Searching for “men’s winter boots”, on the other hand, is a strong signal that searchers want to buy. From a search engine perspective, the second query is much easier to monetize because advertisers are likely reaching buyers. The closer a query is to buying intent, the better it can be monetized because a) the intent is clearer, which b) raises the chance that advertisers are happy with the results, and c) competition is stronger, so advertisers outbid each other.

As a result, AI answers with citations could surface a single recommendation for a product or service in the future but serve more results in case searchers want to compare. In this search experience, advertisers likely cannot bid on the single (best) answer but can still bid on the results that follow. I don’t think Shopping Search will ever just feature a single answer without advertising options.

The real danger to Google

Because ads have a future in a world of AI answers, I don’t think Google’s business model is in grave danger from generative AI. The risk is higher for questions that weren’t easy to monetize in the first place. The bigger danger for Google is a company like OpenAI providing a better search experience for simple questions and using that attention to build an advertising-driven search experience on top.

Another risk for Google comes from LLMs fine-tuned for specific verticals. Google has lost significant market share in Shopping Search to Amazon. Search is split into many verticals, like jobs, homes, recipes, health, etc., but shopping is one of the most lucrative. One of my contacts explained to me that for years, Google has had specialized teams by vertical. The reason is that search preferences are different (which is also why ranking signals are probably weighted differently from vertical to vertical). For example, users might prefer more visual results when shopping for products, while health-related results need to be created by experts in the field. LLMs could be fine-tuned for specific verticals and return much better results faster, which would tear Google as a single search engine further apart.

One way Google could disrupt its own business model is by licensing LLMs to developers. Similar to how Amazon’s AWS provides cloud hosting to tech startups, Google could provide the LLMs that new companies are built on. AWS is estimated to be worth one trillion USD, and Google’s market cap is a bit more than that at the moment. If Google provided the foundation of LLMs through Google Cloud, it might not have to care about search as much anymore.

However, I wouldn’t be so quick to discount Google, which developed the transformer architecture that forms the foundation of generative AI. We haven’t even seen the power of LaMDA and other Google LLMs yet. With the addition of E for Experience to Google’s search quality concept EAT (Expertise, Authoritativeness, Trustworthiness), Google is preparing to surface more results in Search that cannot be created by AI.

On top of that, the results from generative AI can still be questionable and sometimes wrong and biased. LLMs are expensive to maintain (ChatGPT costs $100,000 a day to run for one million users), slow to update, and sometimes slow to run. We haven’t even spoken about the legal implications of training models on the public web. At least, we now have an idea of what AI search could look like.