How to rock SEO in a machine learning world

Google uses more and more machine learning in Search. In this article, I explain how to thrive in this world.

The biggest threat to SEOs is missing substantial changes in the industry. If you are not aware of how machine learning already impacts SEO, you’re at high risk of making the wrong decisions for your company or client. The time to get ahead of the curve is now.

In hip-hop, they say “the game has changed”.

That’s why I spoke at Digital Summit in Denver about exactly that topic.

Slides: “How to reach SEO level 9000 in a machine learning world” by Kevin Indig

The research for the presentation left me with a ton of references and resources, which I didn’t want to throw away. Hence this article. If you want to check them out right away, scroll to the bottom of this article.

Before we dive deep into the topic, two caveats:

I’m not going to provide a general introduction to machine learning. Others, like Eric Enge, Udacity, the New York Times, Jeff Dean or Mike King, can do that much better.

Please be aware that we’re not going to wake up in the Terminator apocalypse tomorrow. Machine learning and artificial intelligence are still very much in their infancy. They’re only used sparingly in SEO. Yet.

Artificial intelligence will substantially change the world – and Google

The world is spinning faster and faster. The iPhone just turned (only) 10. Only 13 years after it started, Facebook has reached 2 billion users. Google has reached a level of data size and processing capability, at which they’re able to make almost daily updates to their algorithm(s). Machine learning will only accelerate that development.

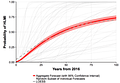

In a study [1] published in May 2017, researchers from Oxford and Yale surveyed AI experts (352 responded out of roughly 1,600 contacted) about when they thought artificial intelligence could reach human-like intelligence.

The participants also estimated that machines will be better than humans by:

2024 in translation

2026 in writing a high school essay

2027 in driving a truck

2031 in selling in retail

2049 in writing a bestseller

2053 in performing surgery on a human being

Of course, the usual suspects, from Facebook to Amazon, drive this trend. And of course, Google. It started with the acquisition of DeepMind [2], went on with Sundar Pichai stating that Google moved from being “a mobile-first company to an AI-first company” and reached a tipping point when John Giannandrea replaced Amit Singhal as head of search in 2016 [3]. Amit was a strong proponent of classic information retrieval. According to people who worked with him on the search algorithm, his intuition for search quality was so good they just had to build the algorithm around it. That has now changed with Giannandrea, a clear driver of AI, as the new head of search.

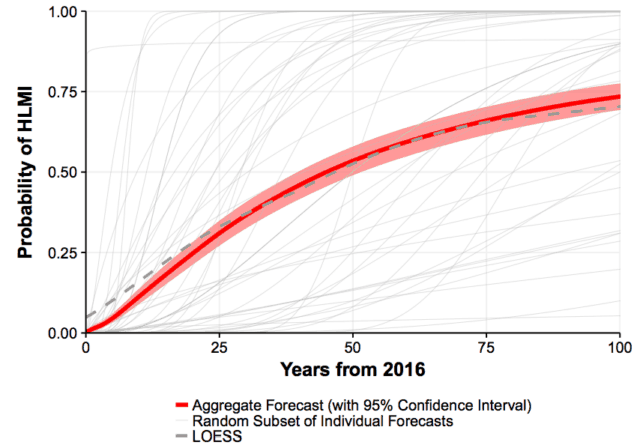

The acquisition of DeepMind accelerated the use of machine learning at Google. A video from Jeff Dean about Deep Learning [4] shows its exponential growth starting in 2014 (the year DeepMind was acquired).

Be aware that this graph ends in Q2 2016, so the data is now about a year old. You can imagine how much further Google has integrated machine learning since then.

That leaves us in a weird kind of transition from classic information retrieval to machine learning. We’re at a tipping point at which it’s important to understand what’s coming at us so we can adjust and reposition ourselves.

So the question is: what does SEO look like in a world that’s on the edge of AI-driven search?

Optimize for user intent

In 2017, you have no chance of ranking if you don’t match the user intent with the right format. Users have clear expectations of what type of content they want for a certain query. But it’s not always obvious what their expectation is. Luckily, the SERPs give us a good idea.

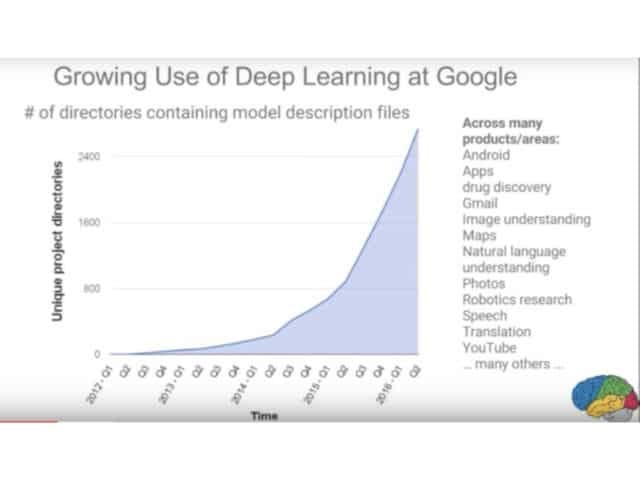

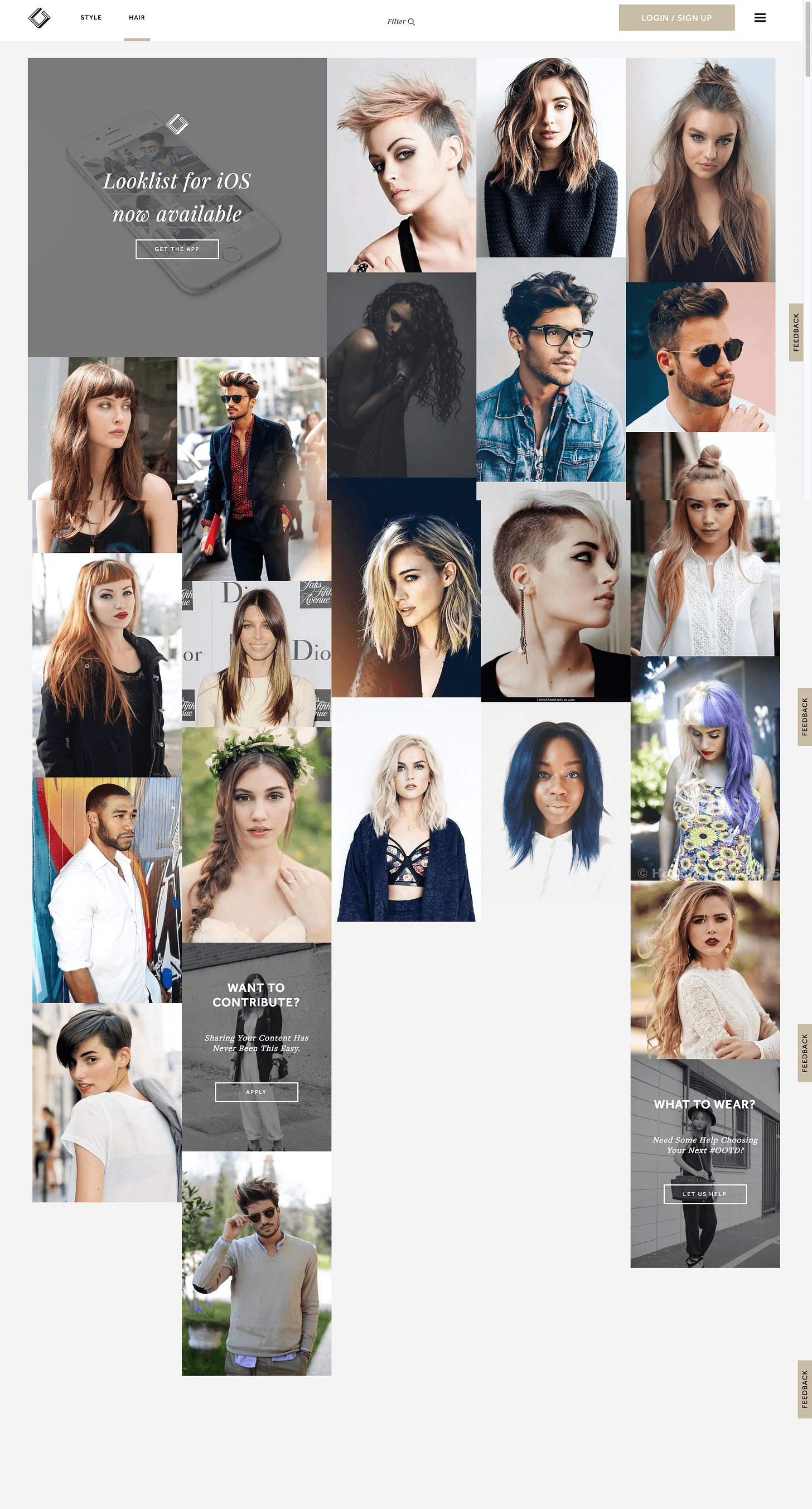

Look at the SERP for “hairstyle inspiration”.

Users are looking for inspiration behind this query. And they want it in the form of images. If your page for that keyword is not image-heavy, you won’t rank. Of course, Google shows an image search integration pretty high on the SERP, which is a hint for that.

By the way, the site www.lookli.st figured that out pretty well and built a whole app around this user intent. They don’t have a single line of text on the page/site and rank #4 for “hairstyle inspiration”. This is a nice example of content not having to be text. Content can be images (Pinterest), videos (YouTube) or an app (Looklist).

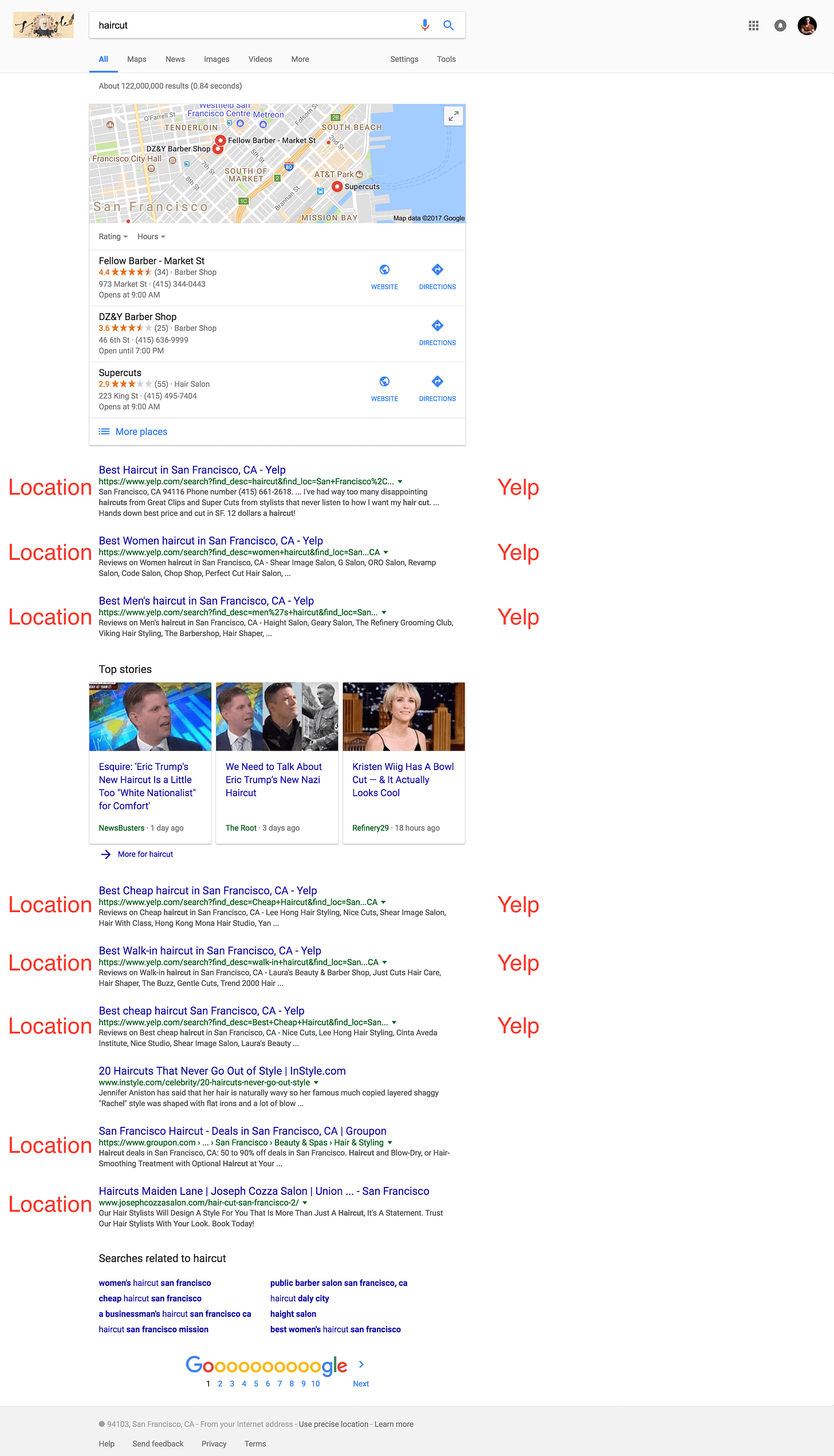

Another example of Google clearly having figured out the user intent for a search query is “haircut”. Users want to actually get a haircut when they search for “haircut”. They don’t want a definition of the word, the history of haircuts or a list of the best haircuts. It’s location-driven user intent.

Google even shows the map on top of the SERP to navigate people to the nearest location right away. Another “hint”. What fascinates me here is that 6 out of 9 organic results are from Yelp.

The query “straighten hair” has a learning-driven user intent. People want to learn the best way to straighten hair. Therefore, Google shows tutorials for 9 out of 10 results. If your content is not structured around a tutorial, you won’t rank.

Google even tries to show a tutorial itself in the Featured Snippet box (hint).

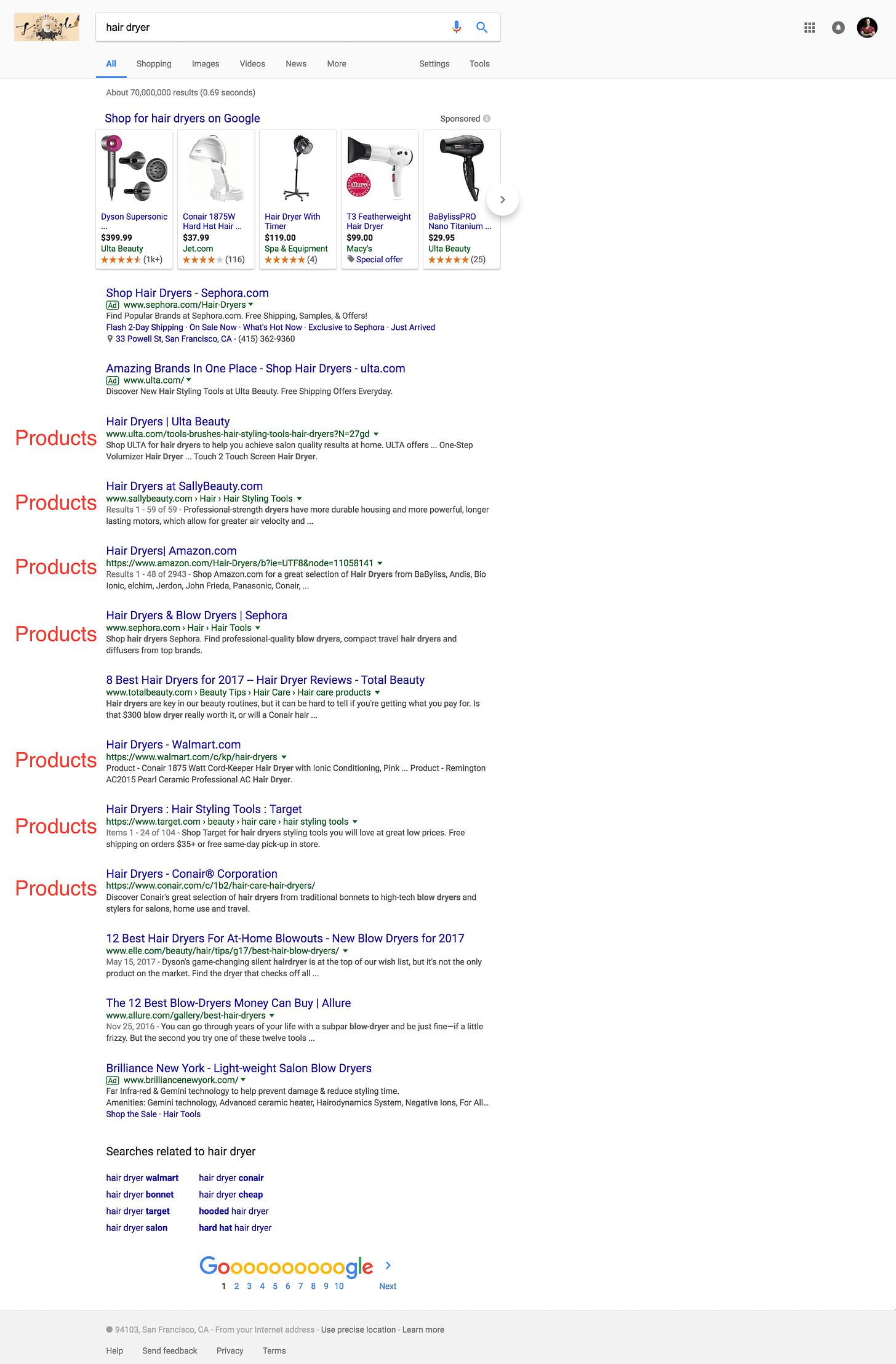

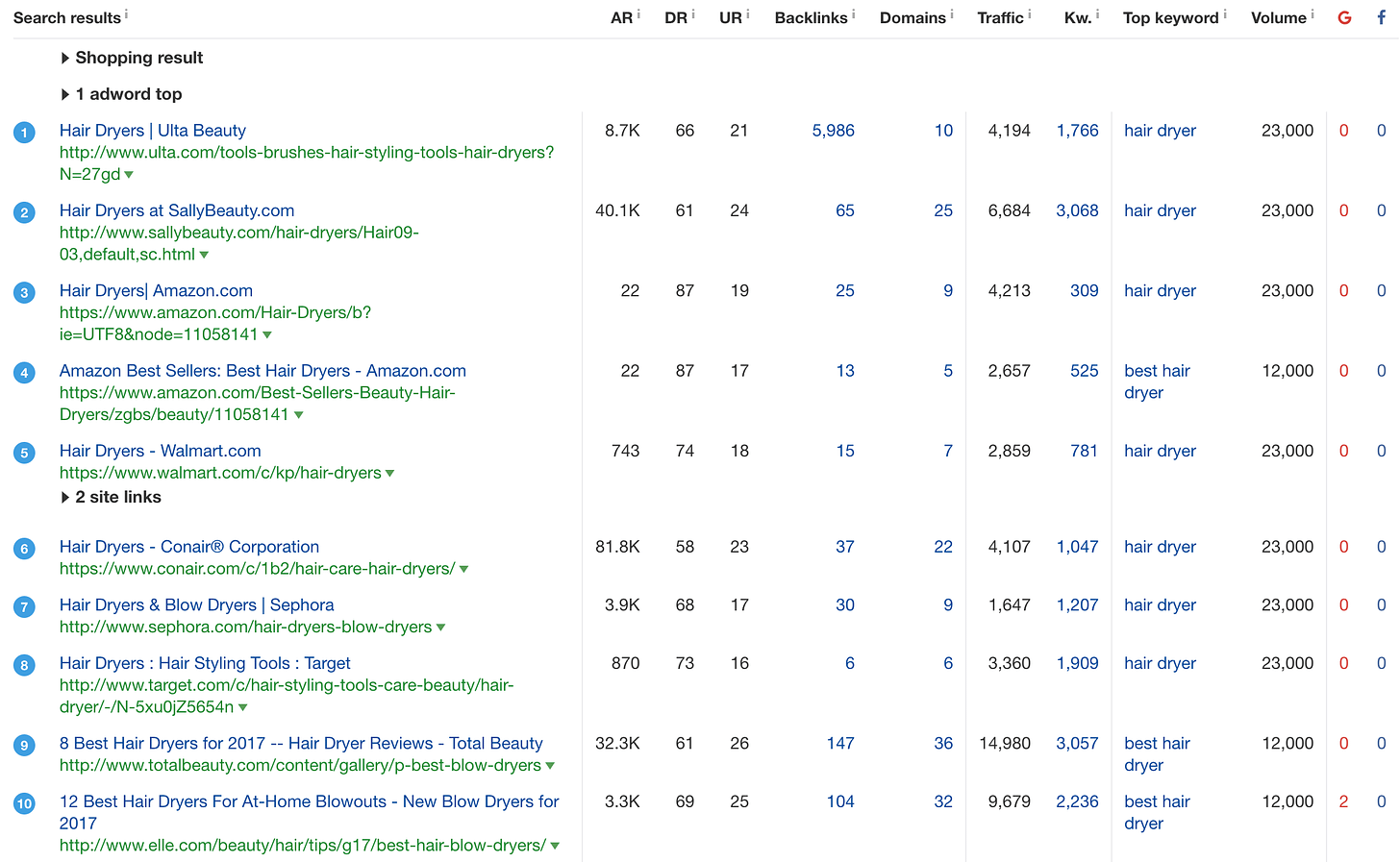

One last example to make the point: people looking for “hairdryer” want to buy one.

Seven out of 10 results are pages where you can buy hairdryers. Only three results compare hairdryers. Of course, Google puts a Shopping integration at the top of the SERP (hint).

So you get the idea: wrong format = no ranking. The minimum viable thing you should do is perform a quick search for the keyword you want to rank for and see if you can satisfy user intent with your content. As we’ve also learned, Google is giving us hints in the SERPs for what kind of content they expect for a given query.
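The “hints” from the examples above can be written down as a simple lookup. This is only a rough sketch: the feature labels and intent names are made up for illustration, and real SERPs often mix several intents.

```python
# Hypothetical mapping from SERP features to the likely user intent.
# The labels are invented for this sketch; use whatever names your
# rank tracker or your own notes provide.
SERP_FEATURE_TO_INTENT = {
    "image_pack": "inspiration",      # e.g. "hairstyle inspiration"
    "local_map": "local",             # e.g. "haircut"
    "featured_snippet": "learning",   # e.g. "straighten hair"
    "shopping_ads": "transactional",  # e.g. "hairdryer"
}


def guess_intents(serp_features):
    """Return the intents hinted at by the features seen on a SERP, in order."""
    intents = []
    for feature in serp_features:
        intent = SERP_FEATURE_TO_INTENT.get(feature)
        if intent and intent not in intents:
            intents.append(intent)
    return intents


print(guess_intents(["shopping_ads", "image_pack"]))
```

Treat the result as a starting point for a manual check of the SERP, not as a replacement for it.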

The next logical question should be “what should I do once I’ve identified user intent?”

Create holistic content

Holistic content is characterized by covering a topic in its entirety, with all related subtopics. That means when you write about the topic “car”, you need to cover attributes (speed, price, horsepower), parts (tires, windshield wipers, seats), brands (Audi, Porsche, Toyota) and features (air conditioning, leather seats, sunroof).

Ask yourself what the classifications [12] within the topic you’re writing about are. Say you’re writing about an animal. An animal is part of a specific species, has a size and attributes like a snout or a tail. Or you write about a city, which also has a size but also an inhabitant count, relationship to a state and country and landmarks.

In machine learning, those classifications are called entities. Google’s goal is to understand the relationship between those entities.

Jeff Dean, the machine learning uber guru at Google, explains this principle in the video (starting 32:19) I mentioned before [4].

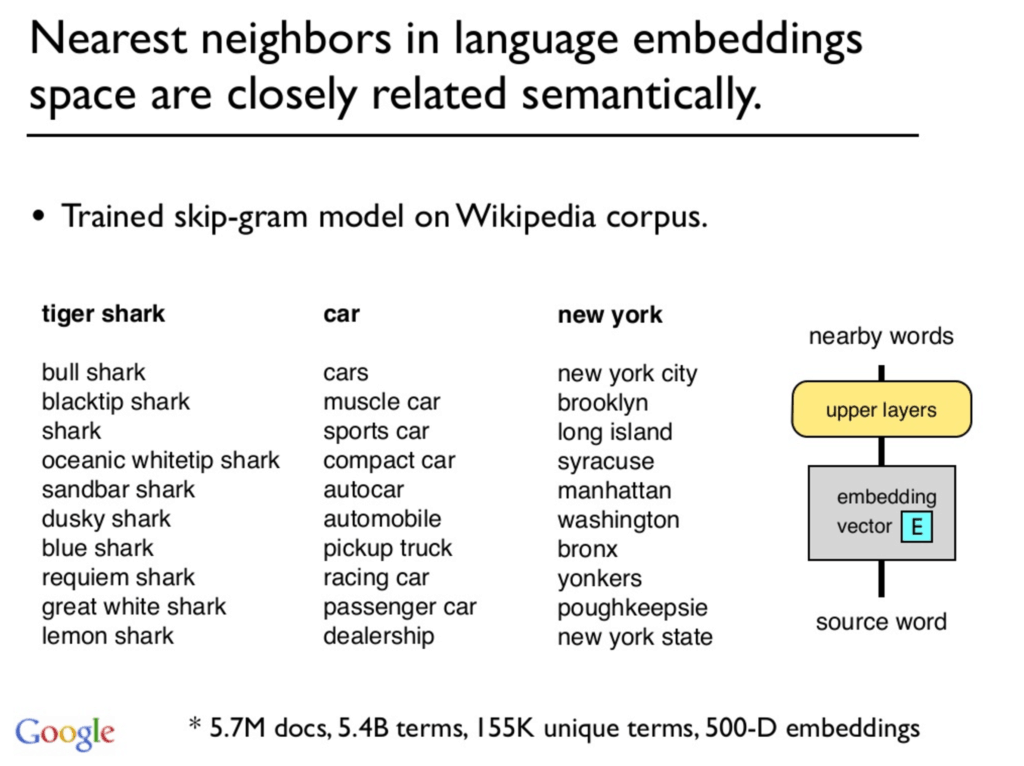

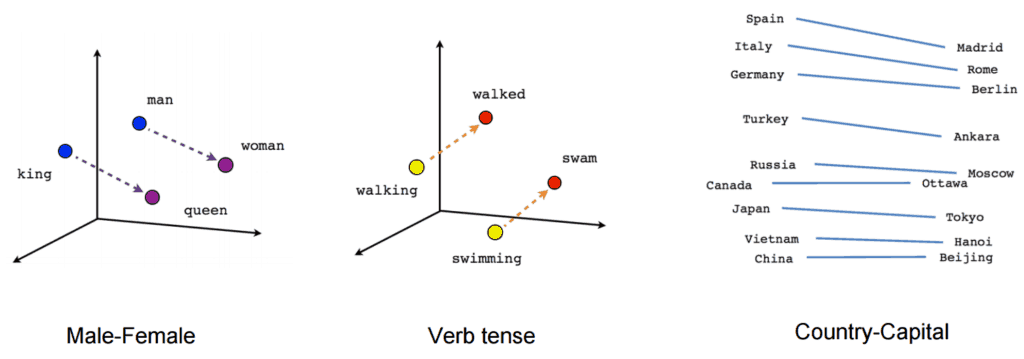

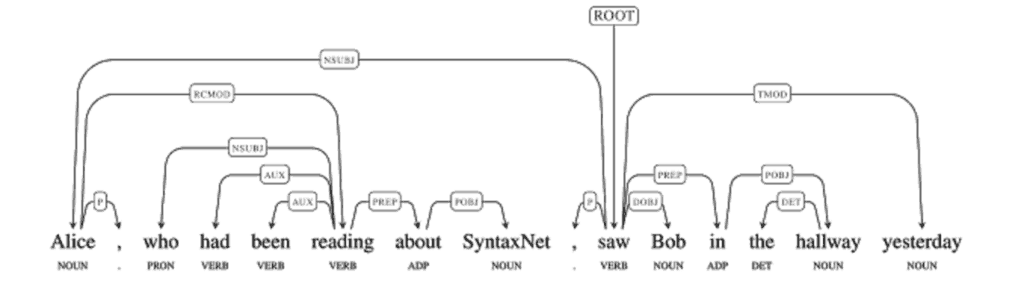

Google is using the natural language processing tools Word2Vec [5] and SyntaxNet [7] in TensorFlow to understand the relationship between words. Word2Vec lets you represent terms as vectors and calculate the distance between them (very simplified).

By the way, our friend Jeff Dean [9] was part of writing the original patent ;-).
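To make the “distance between vectors” idea more tangible, here is a minimal sketch using cosine similarity. The three-dimensional vectors are invented for illustration. Real Word2Vec embeddings are learned from large text corpora and have hundreds of dimensions, and this is not how Google computes anything internally.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors, made up for this example (not real embeddings).
vectors = {
    "car": [0.9, 0.1, 0.0],
    "tires": [0.8, 0.3, 0.1],
    "haircut": [0.0, 0.2, 0.9],
}

for term in ("tires", "haircut"):
    score = cosine_similarity(vectors["car"], vectors[term])
    print(f"car vs {term}: {score:.2f}")
```

With these toy numbers, “tires” ends up much closer to “car” than “haircut” does, which is the kind of relationship between terms the vectors are meant to capture.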

SyntaxNet helps Google understand the syntax of sentences. This is crucial for understanding the relationship between entities.

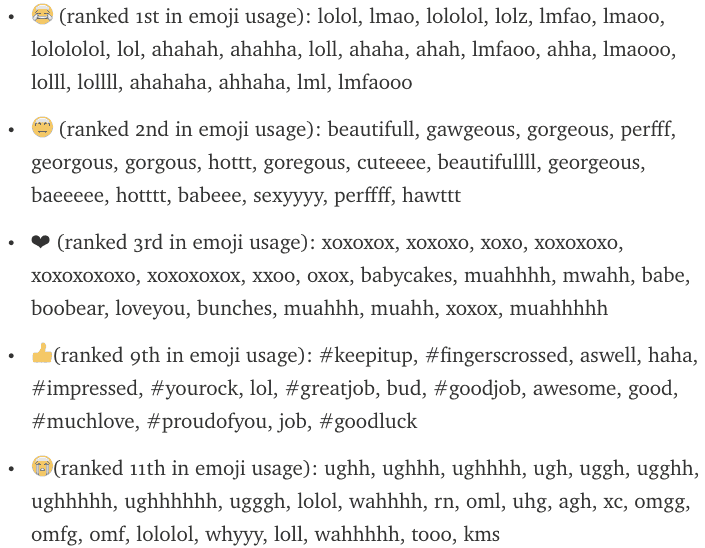

Instagram recently used Word2Vec [6] to understand the context (meaning) of emojis, which are becoming their own language.

That allowed them to understand how users interpret emojis. For example, the praying hands emoji was originally conceived as a high five, but people use it to express gratefulness.

For Google, understanding the meaning behind a topic or keyword is a huge challenge and we can make it easier for them by creating holistic content.

Besides covering all relevant entities, holistic content is also characterized by depth and width. “Depth” means how thoroughly and extensively you cover a topic, “width” means how much you put it into context and cover related topics. One way to achieve that is to consolidate content, i.e. by merging several articles that cover related topics into one big monster article.

Holistic content is also curated, meaning it’s up to date and contains all relevant pieces and references. In concrete terms that means you have to regularly check your content for information that’s outdated and information that’s new but missing on your page.

You also want to make sure to use different types of content on the page (images, videos, social media integrations like tweets and IG posts, apps, etc.) to provide the best user experience. As we’ve learned, user intent leads the way here.

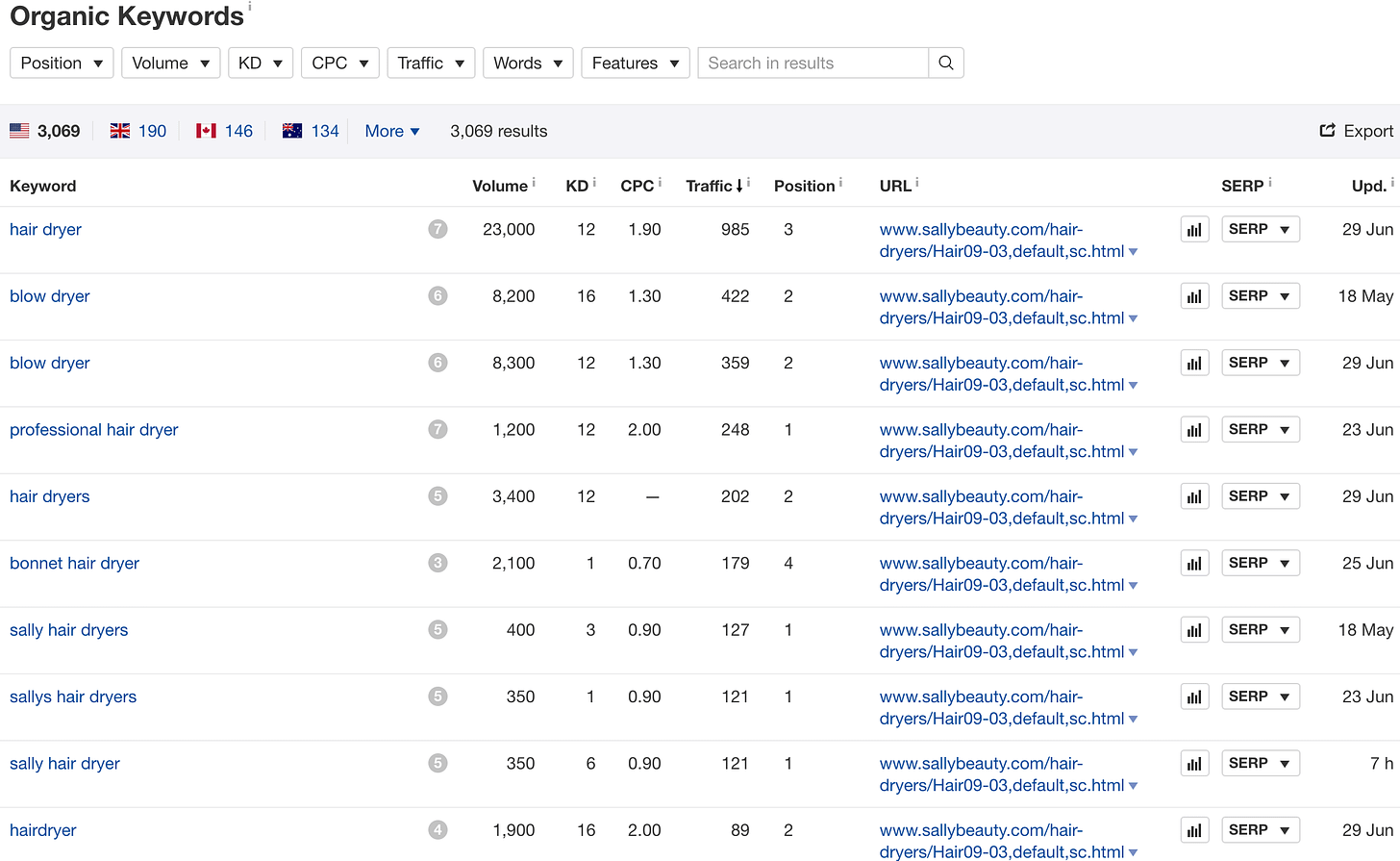

A good way to see how holistic your content is, is to check how many keywords it ranks for. To stick to the hair examples, look at how many keywords the first result for “hairdryer” ranks for. 1,766! With one page! The second result, from Sallybeauty, ranks for 3,068 keywords!

When looking at those keywords, it becomes apparent that Google ranks the page for all kinds of variations and related keywords to “hairdryer”.

We have been seeing that trend since Google overhauled its core algorithm and called it “Hummingbird” in 2013. It has become much better at understanding context and meaning ever since. The engine responsible for that understanding is RankBrain [10]. I’d put a lot of money on the theory that Google’s understanding will skyrocket over the next 12-24 months.

Google pushes for holistic content because it’s the best experience for users. Speaking of users…

Focus on user signals

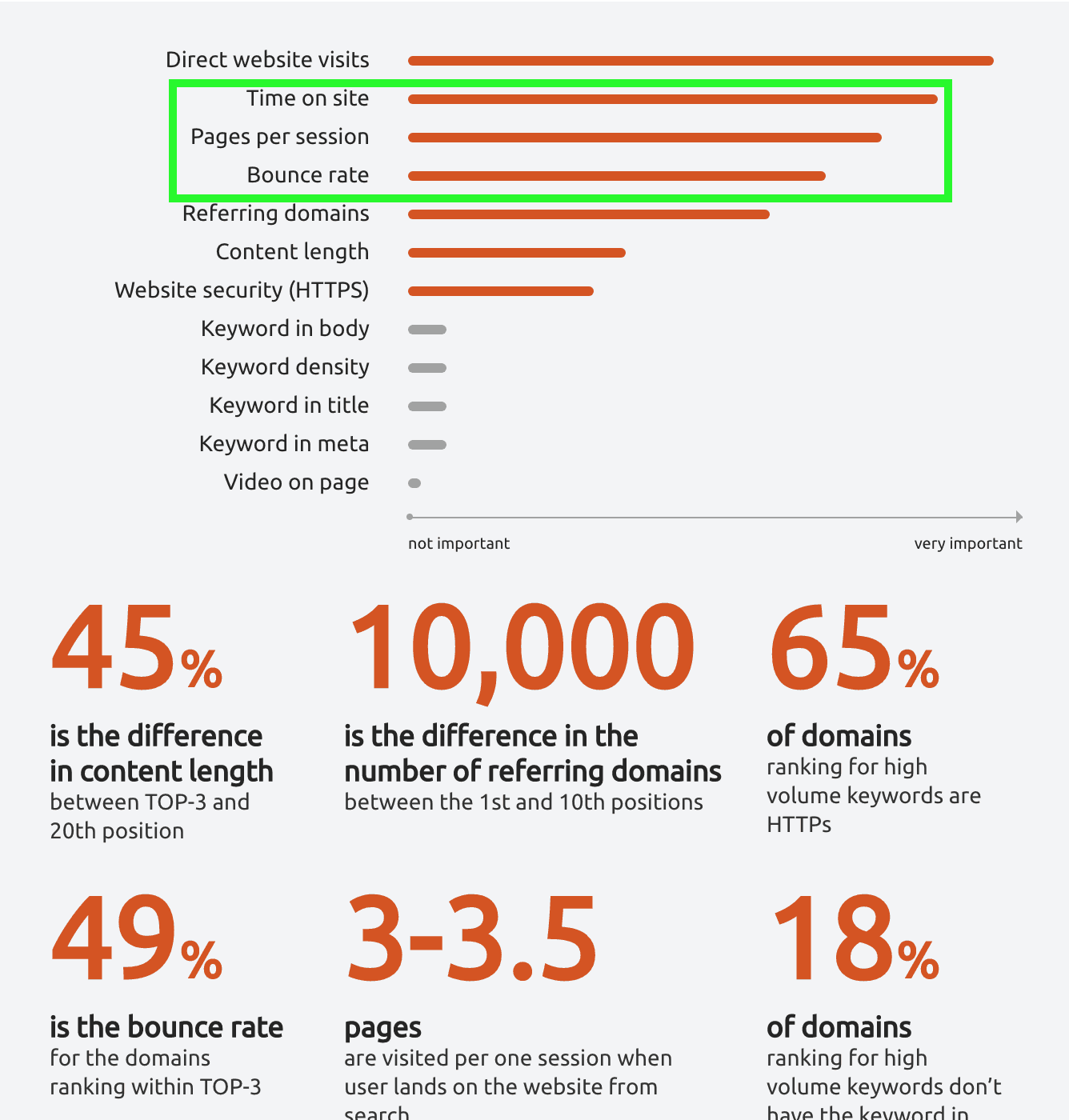

User (behavior) signals tell us how satisfied people coming to our site (from organic search) are with what they see. Are we solving the problem the user came to us for? How much Google uses those signals is controversial [13]. Recent ranking factor studies from Searchmetrics [8] and SEMrush both found correlations between user signals and higher rankings.

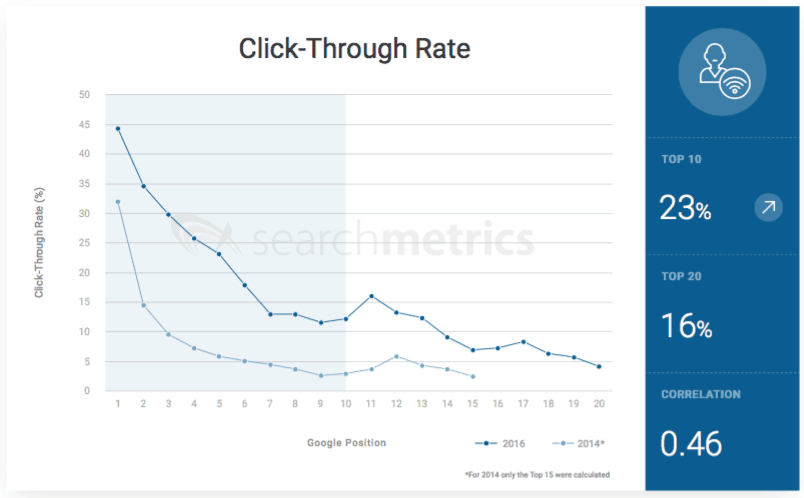

Click-through rate (from the SERPs) in particular seems to carry a lot of weight in ranking. It scored as the strongest ranking factor in Searchmetrics’ last study.

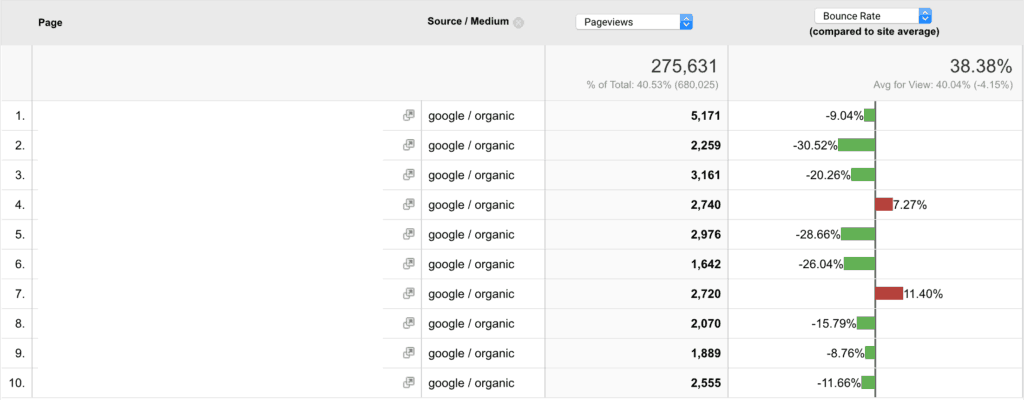

Time on site, pages per session and bounce rate are also worth a look. It’s important to segment these metrics, meaning to break them down further, to get a better understanding. It’s not enough to look at bounce rate, for example. Instead, look at the bounce rate per device or country.

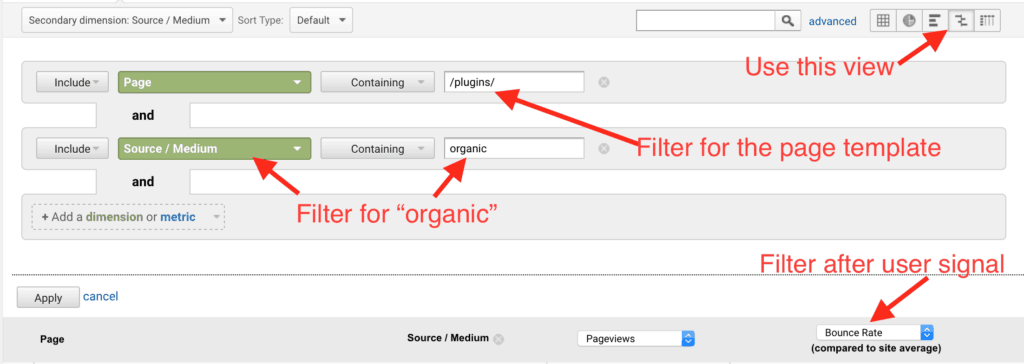

Make sure to compare user signals for pages against the average of the whole site or, even better, against pages with the same template. Also, look at user signals over a period longer than 7 days. In my opinion, the last 30 days are the bare minimum. Otherwise, it’s hard to spot seasonality and get enough data for a representative sample.

In Google Analytics, it would look like this:

As you can see in the example, two pages (I blanked the URLs) are sticking out with a higher-than-average bounce rate.

For those pages, it’s worth investigating what the problem could be. A hypothesis could be:

The page ranks for keywords it’s not relevant for (happens less and less)

The page is not delivering what it promises

The product / main content is bad

…

This process may raise more questions at first, but it will lead you to the core problem. Improving bad user signals is key to SEO success, now and in the future.
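The comparison against the site average described above can also be done outside of Google Analytics, for example on exported data. The following is a minimal sketch under a few assumptions: the field names (`url`, `sessions`, `bounces`), the sample numbers and the 10-percentage-point margin are all made up for illustration.

```python
def flag_high_bounce_pages(pages, margin=0.10):
    """Return the site-wide bounce rate and the pages that exceed it by `margin`.

    `pages` is a list of dicts with the hypothetical keys
    'url', 'sessions' and 'bounces' (e.g. from a 30-day export).
    """
    total_sessions = sum(p["sessions"] for p in pages)
    total_bounces = sum(p["bounces"] for p in pages)
    site_rate = total_bounces / total_sessions

    flagged = []
    for p in pages:
        if p["sessions"] == 0:
            continue  # no data for this page, nothing to compare
        rate = p["bounces"] / p["sessions"]
        if rate > site_rate + margin:
            flagged.append((p["url"], round(rate, 2)))
    return site_rate, flagged


# Invented sample data for pages that share one template.
sample_pages = [
    {"url": "/a", "sessions": 1000, "bounces": 400},
    {"url": "/b", "sessions": 800, "bounces": 320},
    {"url": "/c", "sessions": 200, "bounces": 160},
]

site_rate, flagged = flag_high_bounce_pages(sample_pages)
print(f"site bounce rate: {site_rate:.2f}")
print("pages to investigate:", flagged)
```

To segment further, run the same function once per device or country and compare the flagged pages across segments.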

When putting together my presentation, I also got curious about how backlinks would stack up against user signals and content. I started in an era in which it was enough to bomb a page with backlinks to rank for all sorts of keywords. That obviously changed. So I did a mini-study, looking at four high search volume keywords and comparing the backlink profiles of the ranking pages to their positions. To get the best view of the data, I used Ahrefs, SEMrush and Moz data.

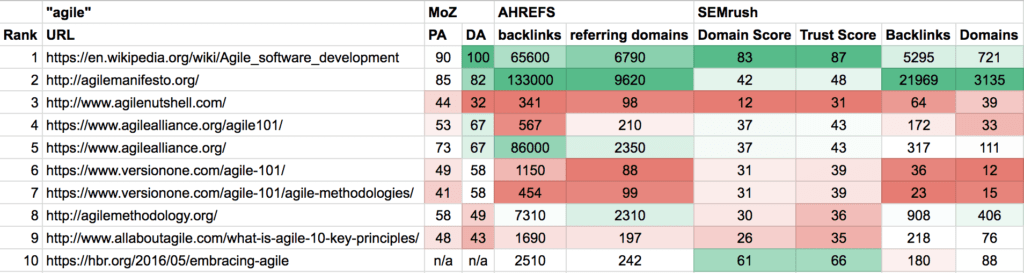

The first keyword I looked at was “agile” (~50K monthly searches). You would expect the top results to have the strongest backlink profiles, but most of the results at #2-10 don’t have strong backlink profiles at all!

The content on agilenutshell.com (third site on the list) is even super thin! It has only two sentences and two images – but the user experience is great and I’m sure lots of people click through the microsite. So this site ranks neither because of content nor backlinks.

User satisfaction: 1, backlinks: 0

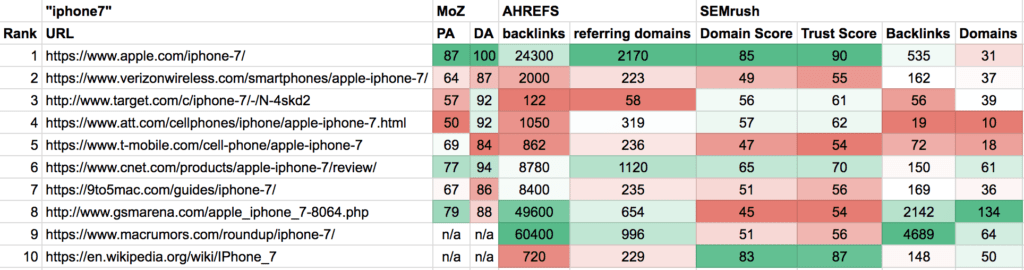

Next, I looked at “iPhone 7”, a keyword with ~277K monthly searches. We see a similar pattern. The first result has a very strong backlink profile. It makes sense since Apple is the producer of the iPhone (and the strongest brand in the world). But then it becomes interesting again: #2-5 have weak backlinks profiles.

User satisfaction: 2, backlinks: 1

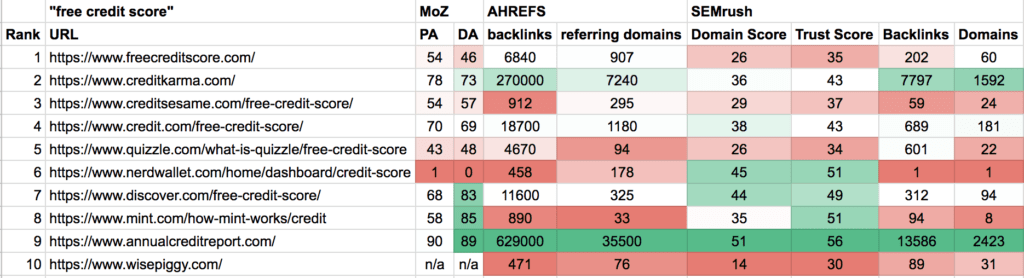

“Free credit score” has ~250K monthly searches but shows a different picture. The result with the strongest backlink profile is at #9!

In fact, most results ranking on top are weak in terms of backlinks, except maybe #2. Given that the credit/finance industry is probably the most competitive (and lucrative) one, this result is astonishing!

User satisfaction: 3, backlinks: 1

Finally, let’s look at the most searched-for keyword in this mini-study with ~450K monthly searches, “auto loan calculator”.

Once again, we find a very mixed picture across the top 10 pages, and only the top result has the strongest backlink profile.

Bottom line: it seems that you still need a strong backlink profile to rank #1 but not for #2-10.


If I had to hypothesize, I’d say that machine learning lets Google handle huge masses of data and therefore makes it more feasible to use user behavior data. Google representatives used to say that this data was “too noisy”, but I personally believe this is becoming less and less of an issue.

Test how ranking factors apply to you

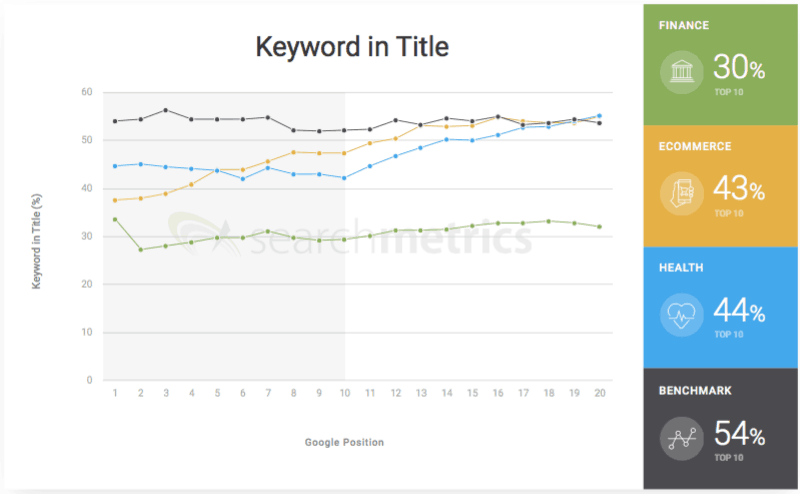

Another huge change in the SEO industry is the move from general ranking factors to industry-specific ranking factors. Say what? Yes! Studies like the recent ones from Searchmetrics are starting to show how ranking factors seem to be weighted differently from industry to industry.

For example, the ranking factor “keyword in title” applies differently to the health industry than to the finance industry. That’s substantial! From a logical point of view, it makes sense. I have no data to back this up, but I consider it possible that this trend will intensify, and that’s why it’s important to test how certain ranking factors apply to your website and industry*.

*You can’t measure the impact on the whole industry, but you can at least measure it on the keywords you rank for.

As SEOs, we want to know what moves the needle. We share our knowledge with the “scene” and colleagues in the hope that we all make each other smarter. Everyone wins. But when the same rules don’t apply to every industry, we have to find ways to experiment within the industry. That’s where testing comes into play.

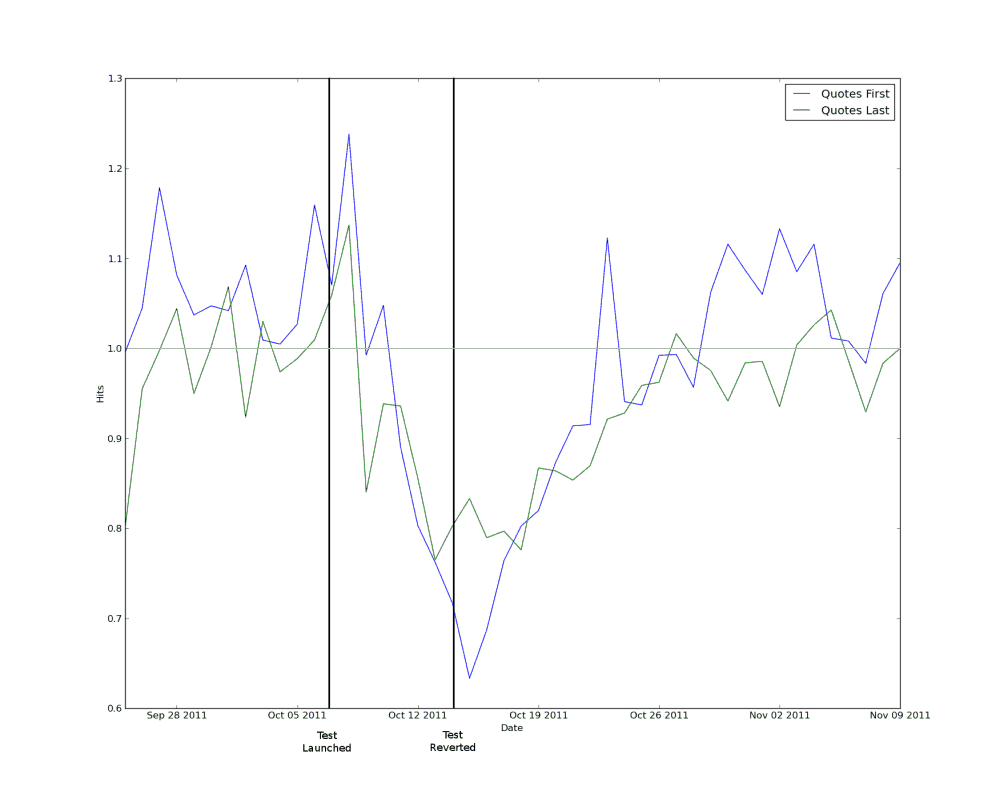

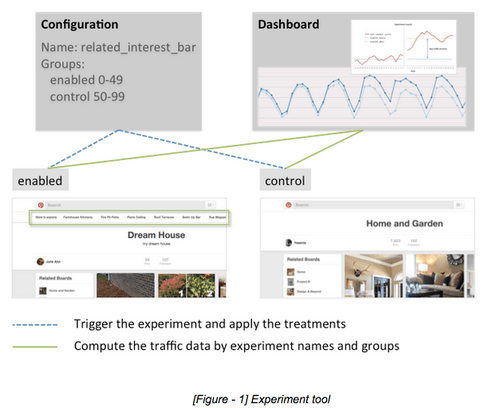

Etsy did it.

Thumbtack did it.

Pinterest did it, too.

The question is: how did they do it? Classic A/B or multivariate testing is impossible in SEO because you’re not dealing with users. You’re dealing with one search engine and a sample size of 1 doesn’t allow for comparison. It’s impossible to create laboratory environments for SEO. We cannot determine the impact of one single factor on the ranking of a page with 100% certainty. Google simply has too many ranking factors that work at the same time and overlap.

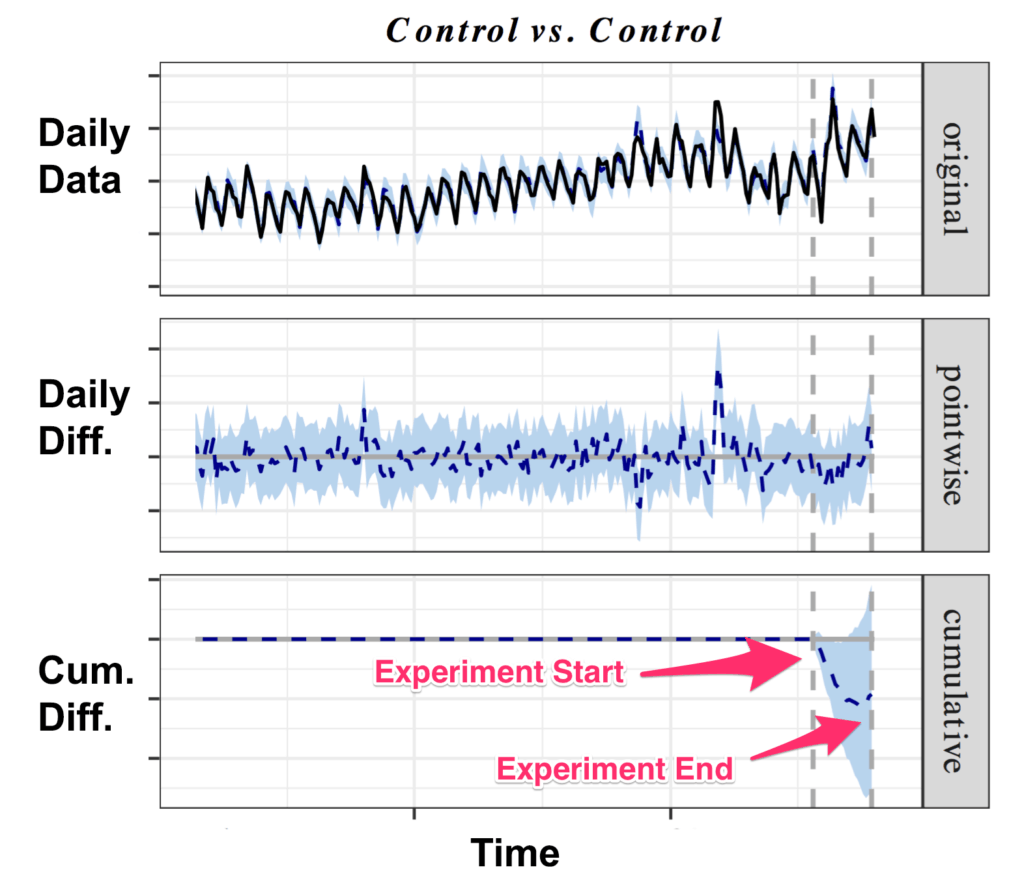

To solve this problem, we use paired t-testing. In a simplified version, this means

a) identifying pages with the same template

b) dividing them into groups (at least two)

c) making changes to one or some of the groups and

d) comparing them against a control group.

It’s important to compare the averages (means) of those groups against each other since they receive different amounts of traffic.
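To make the comparison of group means concrete, here is a minimal sketch of a paired t-statistic in plain Python. It assumes the pairs are days: for each day, you record the average organic clicks per page in the test group and in the control group. The function name and all numbers are invented for illustration, and this is only one way to set up the pairs (before/after values per page would be another).

```python
import math
import statistics


def paired_t_statistic(test_values, control_values):
    """Return (t, degrees_of_freedom) for paired observations.

    Each position in the two lists must describe the same unit
    (here: the same day), measured once per group.
    """
    if len(test_values) != len(control_values):
        raise ValueError("both lists need the same number of observations")
    n = len(test_values)
    if n < 2:
        raise ValueError("need at least two pairs")

    diffs = [t - c for t, c in zip(test_values, control_values)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation
    if sd_diff == 0:
        raise ValueError("all differences are identical, t is undefined")

    t = mean_diff / (sd_diff / math.sqrt(n))
    return t, n - 1


# Invented daily averages (clicks per page) for five days.
test_group = [12, 15, 11, 14, 13]
control_group = [10, 12, 10, 11, 12]

t, df = paired_t_statistic(test_group, control_group)
print(f"t = {t:.3f} with {df} degrees of freedom")
```

To judge significance, compare the t value against a t-distribution table for the given degrees of freedom, or let a statistics library compute the p-value. Five days is far too short for a real experiment; the small sample only keeps the example readable.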

A couple more things to consider:

Taking a large enough set of pages and choosing reasonable group sizes is crucial for the experiment’s success. If you have 1,000 pages in the set, you can easily divide them into four groups or even 10. At the same time, 5 pages are not enough to test.

Leave enough time for the changes to take effect. Since pages have a different crawl frequency it will take Google longer to crawl some compared to others. Therefore, the changes you make to the test group(s) take some time to take effect. Wait for at least 14-21 days after having made the change(s) and after reverting the change. The experiment run-time also depends on the amount of traffic you get on these pages. With time, you get a better feeling for reasonable run time.

Don’t forget to revert the change you made to the test group(s). Otherwise, you cannot measure the exact impact of the ranking factor.

Document experiments for posterity / the SEO scene / personal indulgence. Too often, I see great learnings that are not documented within companies because people are too lazy to do it.

Question your findings. Research (and testing) is a game of questions that never ends. Answers lead to more questions. Of course, you can act on findings from the test but you should never stop testing. It should become part of your DNA.
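Dividing pages into groups is easiest to do randomly, so that no group accidentally ends up with all the strong pages. A minimal sketch, assuming you have a list of URLs that share one template (the URL pattern, group count and seed are made up for illustration):

```python
import random


def split_into_groups(urls, n_groups=2, seed=42):
    """Shuffle URLs reproducibly and deal them into n_groups of similar size."""
    if n_groups < 2:
        raise ValueError("need at least two groups (e.g. one test, one control)")
    shuffled = list(urls)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    return [shuffled[i::n_groups] for i in range(n_groups)]


# Hypothetical pages with the same template.
pages = [f"/product/{i}" for i in range(10)]
groups = split_into_groups(pages, n_groups=3)

for index, group in enumerate(groups):
    print(f"group {index}: {len(group)} pages")
```

Keeping the seed fixed means you can document the exact split together with the experiment and reproduce it later.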


But testing is not the only thing we now have to do to thrive in this new environment. We can’t only look at ourselves. The growing trend of industry-specific ranking factors also makes it crucial to keep an eye on others.

Monitor your industry

More often than not, we can learn what works from the best in class. Monitoring your competitors reveals not only opportunities and threats but also where the industry is going as a whole. In SEO, we look at market share in terms of keywords and rankings.

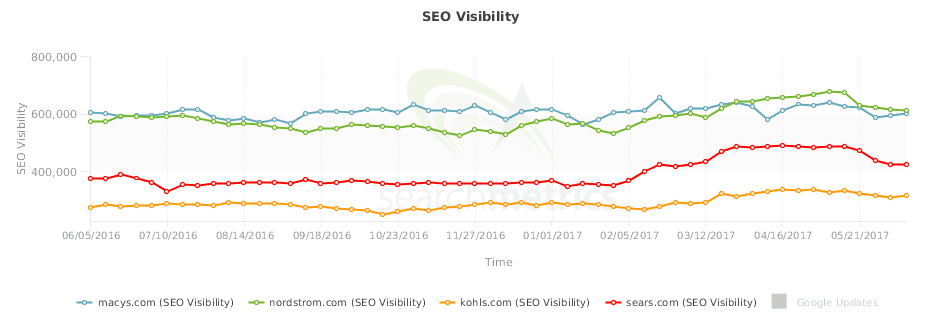

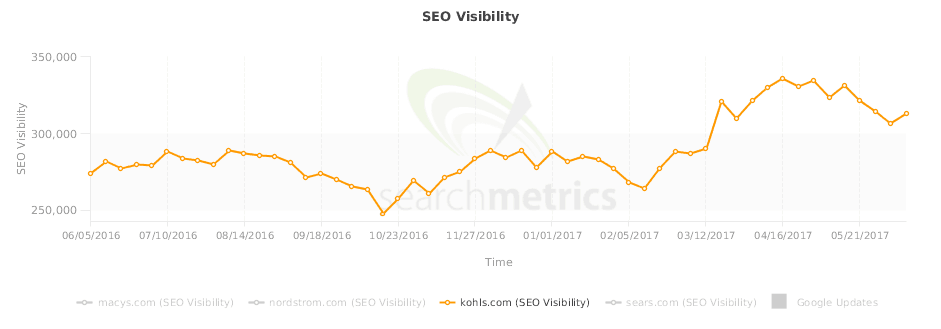

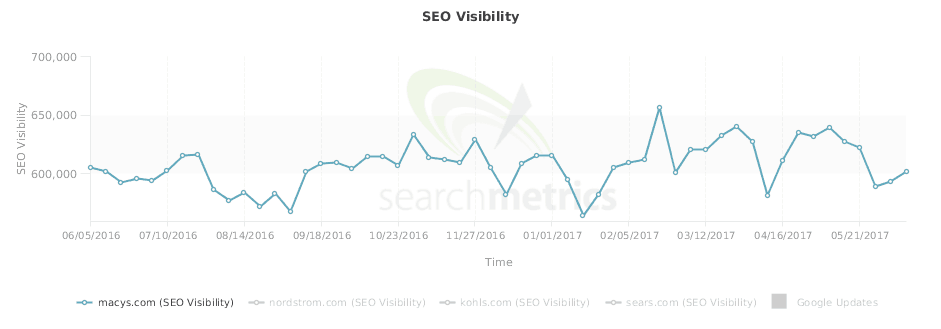

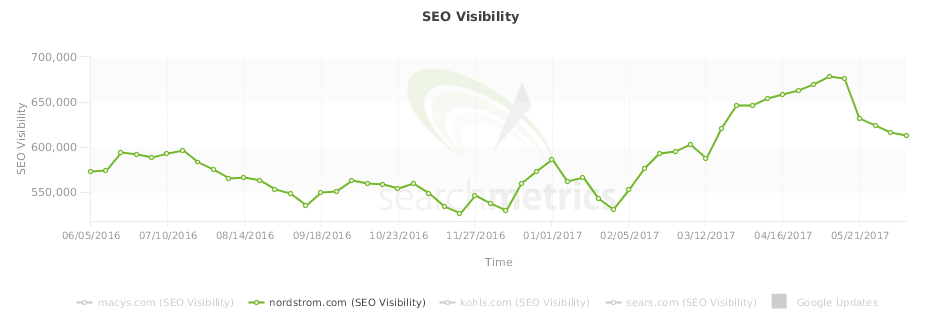

Take the fashion retail industry, for example. We have Macy's (blue), Nordstrom (green), Kohl's (orange) and Sears (red). Let’s focus on these for now. By looking at sheer SEO Visibility, which in itself doesn’t tell you much, we see that Macy's is about twice as big as Sears.

Market trends become obvious when we zoom in a bit and look at the shifts on a keyword level. All players had an uptick of SEO Visibility starting on 01/29 and a fall starting on 05/14.

Well, that’s interesting.

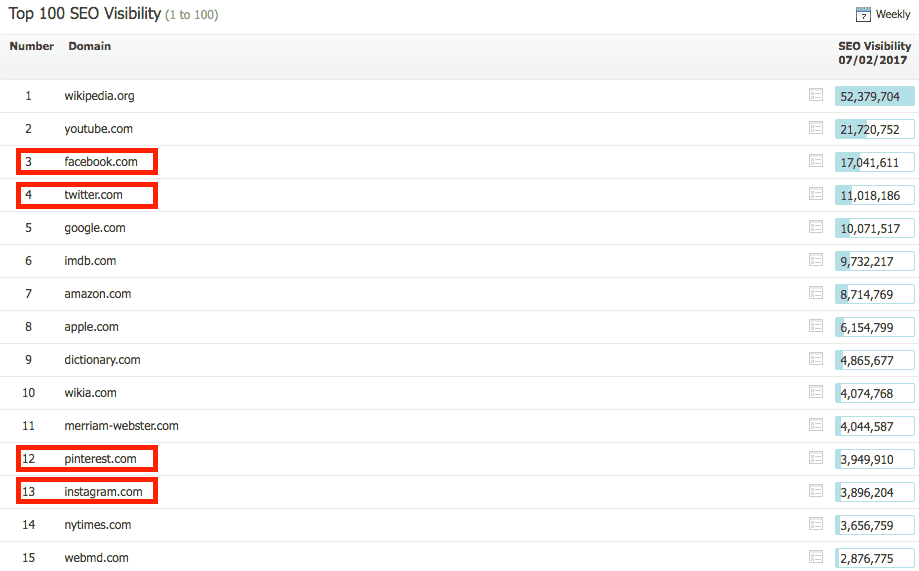

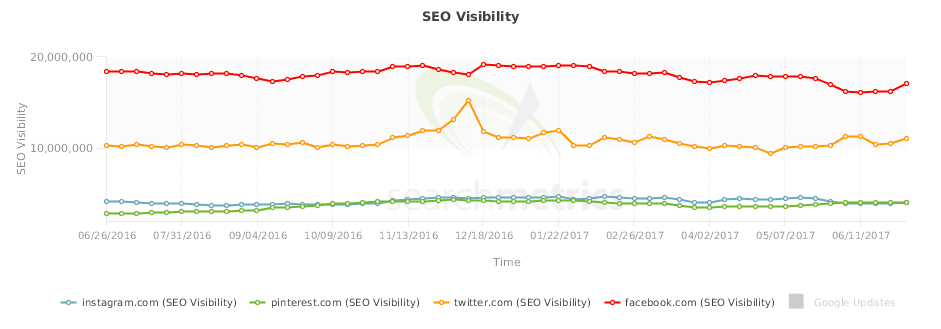

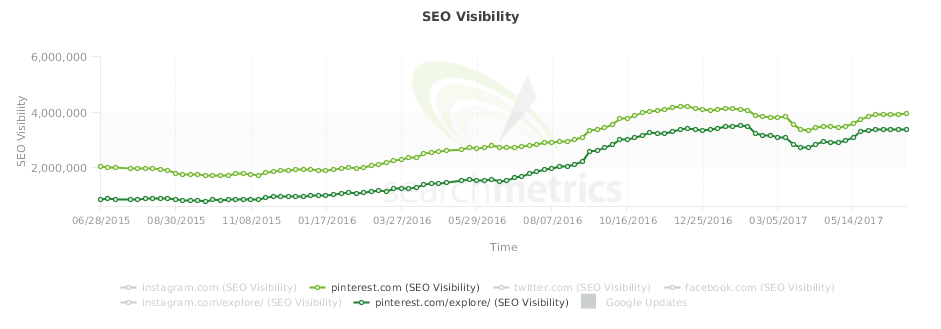

Let’s look at another example that showcases an even more interesting market shift. The social media industry has some of the largest sites in the world.

Facebook (red) is clearly leading the herd, followed by Twitter (orange), while Instagram (blue) and Pinterest (green) are in a close race for third place.

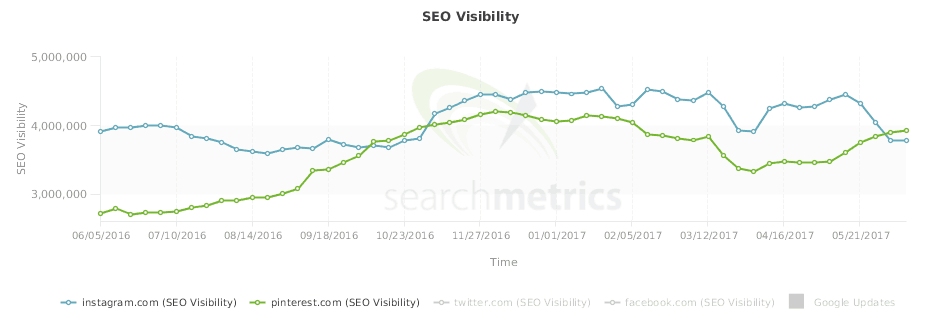

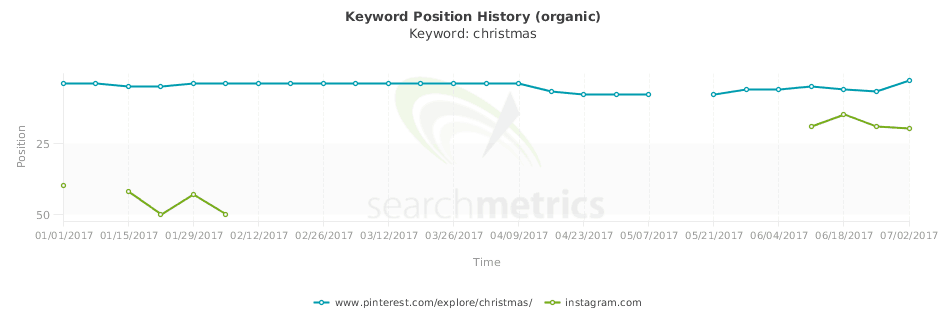

It turns out that Pinterest passed Instagram in the first week of June.

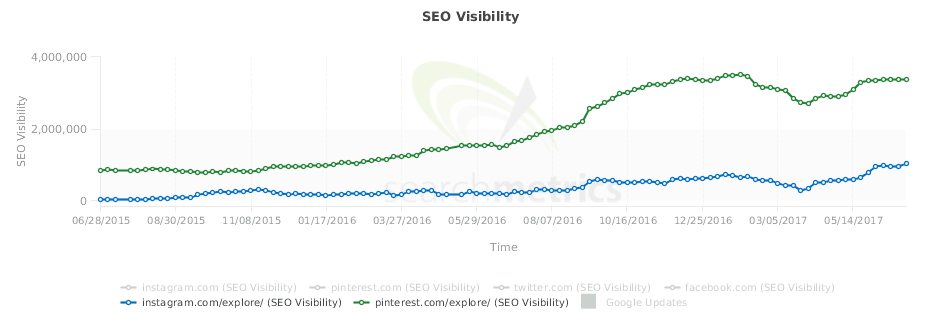

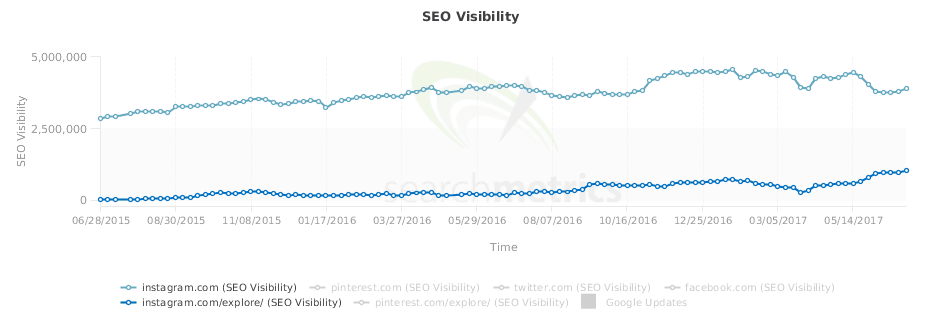

Both sites have “explore” subdirectories that contain pages on which they show collections of user-generated images for certain topics.

What’s important to notice is that the /explore/ subdirectory provides a huge chunk of rankings for Pinterest…

…but not for Instagram.

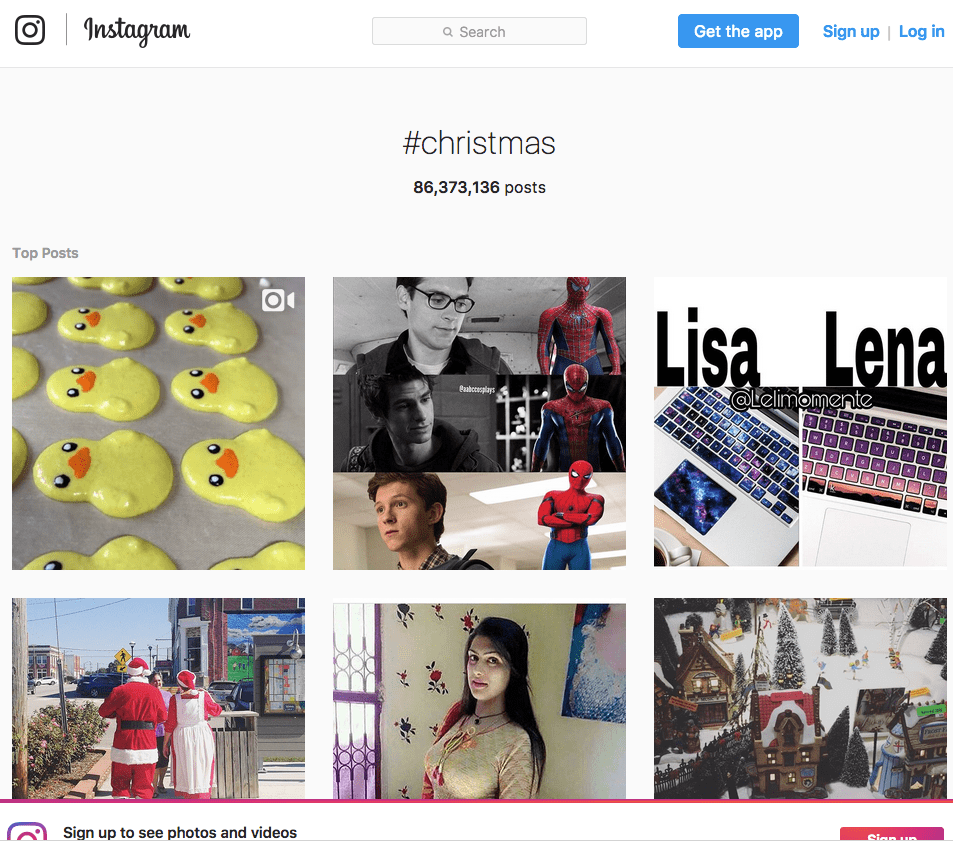

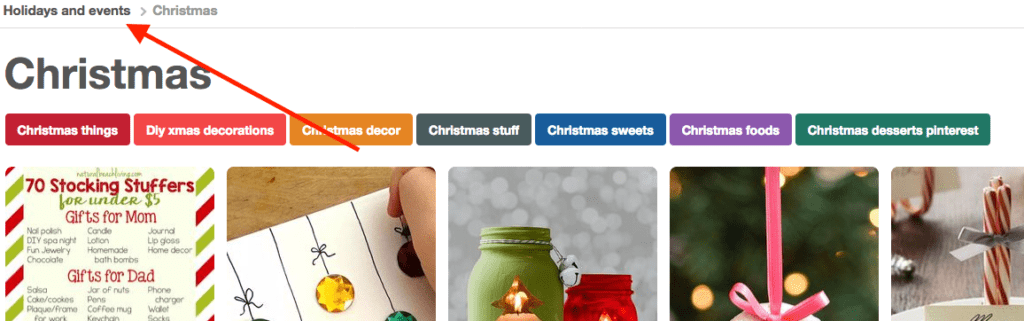

One of the topics both sites collect images for is “Christmas”. The Christmas page on Instagram looks similar to the one on Pinterest at first glance, but looking closer reveals some interesting details.

There are small, subtle differences that have a big impact. They could be the reason why Pinterest is passing Instagram.

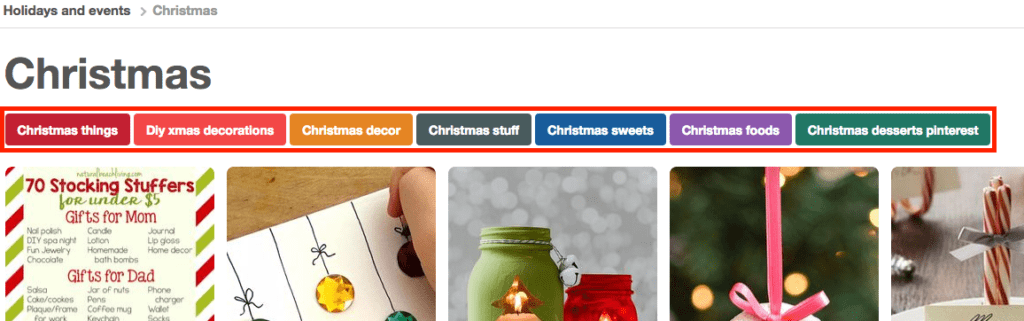

While Instagram has only the images, Pinterest does a much better job of linking the explore pages together to create topic hubs. Remember when I wrote about holistic content earlier in this article? That’s exactly what Pinterest is doing here. They cover topics holistically.

You see the colored buttons under the headline of the page (“Christmas things”, “Diy xmas decorations”, “Christmas decor”, etc.). That’s the first layer: related topics / keywords. By covering all related sub-topics they increase their relevancy for the main topic (Christmas).

The breadcrumb navigation at the top of the page creates the second layer. You see it links to a higher level in the hierarchy, “Holidays and events”.

This shows how strategically Pinterest covers sub-, main and related topics. The strategy pays off big time. Instagram’s Christmas page doesn’t stand a chance against Pinterest’s, even though Instagram’s user base is 4x as big as Pinterest’s.

Imagine you’re a player in the social media industry. You need to know these things! They can give you a competitive advantage, which translates into dollars.

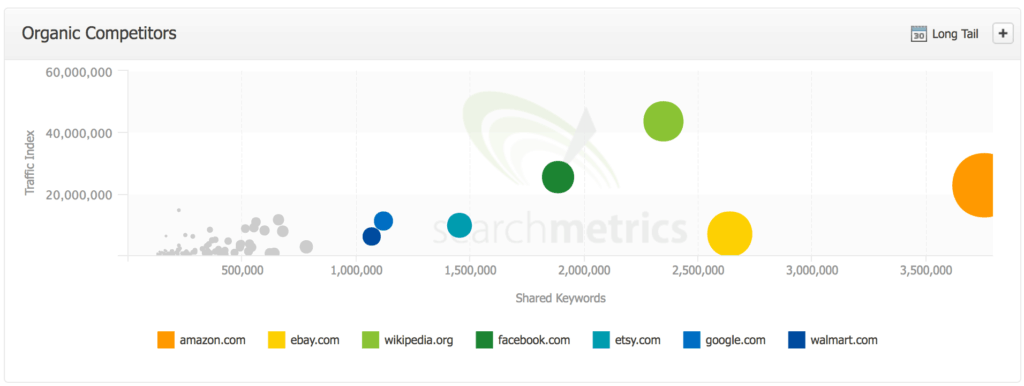

You get much more insightful data when you monitor your most important keywords against your competitors to understand your market share. If you were Pinterest, this would tell you that you’re competing not only with Instagram but also with Amazon, eBay, Wikipedia, etc.

Pinterest competes with online retailers now because more transactional keywords also have an inspirational user intent (assumption). It used to be that people either wanted to learn or to buy online, but nowadays people are skimming the internet to get inspired and find new things. It’s online window shopping.

You only come to these findings when you invest time monitoring your industry.
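One simple way to express market share on a keyword level is the fraction of your tracked keywords for which a domain appears in the top 10. The sketch below assumes you already have ranking data from a rank tracker. The data structure, the keywords and the rankings are all invented for illustration.

```python
from collections import Counter


def top10_share(rankings):
    """Fraction of tracked keywords for which each domain ranks in the top 10.

    `rankings` maps a keyword to its list of ranking domains, best first.
    """
    if not rankings:
        return {}
    counts = Counter()
    for domains in rankings.values():
        counts.update(set(domains[:10]))  # count each domain once per keyword
    return {domain: count / len(rankings) for domain, count in counts.items()}


# Made-up rankings for two tracked keywords.
tracked = {
    "christmas decor": ["pinterest.com", "amazon.com"],
    "christmas gifts": ["amazon.com", "ebay.com"],
}

for domain, share in sorted(top10_share(tracked).items()):
    print(f"{domain}: {share:.0%}")
```

Tracking this number over time for yourself and your competitors shows who is gaining or losing ground on the keywords that matter to you.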

Tl;dr: Focus on user intent, holistic content, SEO testing and monitoring your industry

Machine learning will change SEO more fundamentally than Penguin and Panda did – but we’re not there yet.

AI is only used in small, specific use cases for organic search but its usage seems to be growing quickly. My hypothesis is that it will open many doors for Google to use data that was “too noisy” before. It will transform search and our lives in general [11].

To stay on top of SEO and still be successful in 2020, make sure to get the user intent right, measure user behavior and create holistic content. Make sure you keep a close eye on your industry by tracking specific keywords, monitoring your competitors and testing which ranking factors apply to you. Finally, make sure to follow me on Twitter @Kevin_Indig and follow this blog to get regular updates.

PS: the last point is optional ;-).

Resources

[1] https://arxiv.org/pdf/1705.08807.pdf

[2] https://backchannel.com/the-deep-mind-of-demis-hassabis-156112890d8a

[3] https://backchannel.com/google-search-will-be-your-next-brain-5207c26e4523

[4] https://youtu.be/vzoe2G5g-w4?t=11m55s

[5] http://papers.nips.cc/paper/5021-distributed-representations-of-words-and-phrases-and-their-compositionality.pdf

[6] https://engineering.instagram.com/emojineering-part-1-machine-learning-for-emoji-trendsmachine-learning-for-emoji-trends-7f5f9cb979ad

[7] https://research.googleblog.com/2016/05/announcing-syntaxnet-worlds-most.html

[8] http://pages.searchmetrics.com/rs/656-KWJ-035/images/Searchmetrics%20Ranking%20Factors%20-%20Finance%20US%20-%202017.pdf

[9] https://research.google.com/archive/large_deep_networks_nips2012.html

[10] http://searchengineland.com/faq-all-about-the-new-google-rankbrain-algorithm-234440

[11] https://www.whitehouse.gov/sites/whitehouse.gov/files/images/EMBARGOED%20AI%20Economy%20Report.pdf

[12] https://www.youtube.com/watch?v=5lCSDOuqv1A

[13] http://www.seobythesea.com/2017/05/how-does-google-look-for-authoritative-search-results/