Search On 2021 - The rise of visual indexing

Search On 2021 was full of highlights and important changes to Search. In this article, I describe how Google is building a higher Walled Garden and moving toward Visual Indexing.

How visual indexing pushes the evolution of content forward and why Google’s walled garden is growing

Google’s 2021 Search On event orbited around two themes: Visual Indexing and Walled Gardens. In an Apple-like keynote, the Search team presented four Search improvements that highlight how Google indexes and serves visual content and keeps more traffic for itself instead of sending it to websites.

Visual Indexing

Richard Feynman, one of the most important physicists in history, is known for a story about naming versus meaning (source). One day, his father takes young Richard out to the woods and teaches him the names of all sorts of birds they encounter. He then points out that the names of the birds don’t tell you anything about them, such as what a bird eats, how it migrates, or where it finds a partner.

The message is that knowing the name of something does not equal understanding it. The two are easy to confuse but important to differentiate.

Google Search faces a similar challenge. Indexing and ranking content says nothing in itself about what that content means. That’s why Google invests tremendous resources in Natural Language Understanding (NLU). Semantics is often called one of the most complex problems in AI.

No wonder, then, that the star of Search On 2021 is MUM (Multitask Unified Model), an evolution of BERT that was first presented at Google I/O in May 2021. Multimodality is a key component of MUM because it allows Google to index and understand content in various formats (text, images, videos, audio).

Given how visual today’s most dominant social networks are (Instagram, YouTube, and TikTok), Visual Indexing makes sense.

Search On showed an impressive example of MUM in action: suggestions of related videos that are based on understanding a video’s context instead of its title or other explicit call-outs. MUM can identify related content simply from the context in the video itself and other content on the web (text, images, entity graph, etc.).

That’s just one of four improvements Google presented at Search On. The fact that MUM drives all four new features shows how advanced the technology is. We are entering a new paradigm in which Google uses semantics (implicit meaning) from images and videos in Search to create a more holistic understanding of topics.

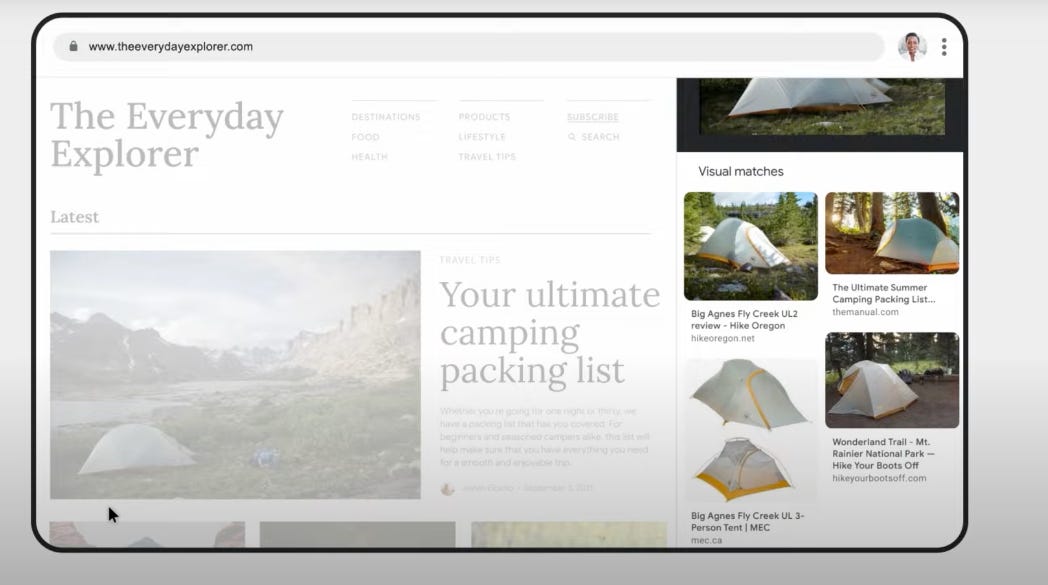

But there is another important evolution of Search that’s easy to miss: Google Lens. You can now use Lens to take pictures on your phone or computer and indicate to Google what you want to learn more about. Whether you are fixing a bicycle or doing something else, taking a snapshot with Lens gives Google more context to return relevant results. The idea of using an assistant to enrich your query is just as revolutionary as Visual Indexing itself.

For the longest time, Search was a query experience. Now, Google branches into gestures that provide more context.

The one question that remains is how widespread the adoption of Google Lens will be, which in turn depends on the quality of the feature. The problem it addresses, not knowing what to call something or how to phrase a query, is a big one. However, voice search also created huge hopes and has so far only disappointed as a channel for marketers.

Walled Gardens

At Search On, Prabhakar Raghavan, Google’s head of Search, Ads, and Assistant, was particularly vocal about the fact that Google still sends traffic to websites.

Over the past year, we've sent more traffic to the open web than any prior year.

Every day, we send visitors to well over 100 million different websites, and every month, Google connects people with more than 120 million businesses that don't have websites, by enabling phone calls, driving directions and local foot traffic.

“How AI is making information more useful”

What he hasn’t commented on is the baseline this traffic is compared against. Is it more traffic because more people use Search? And what is the open web?

It almost feels like Google wants to get ahead of the controversy Search On has already sparked days after the event: Google keeps more traffic for itself.

Besides inspiration and information from videos, Google announced three more features:

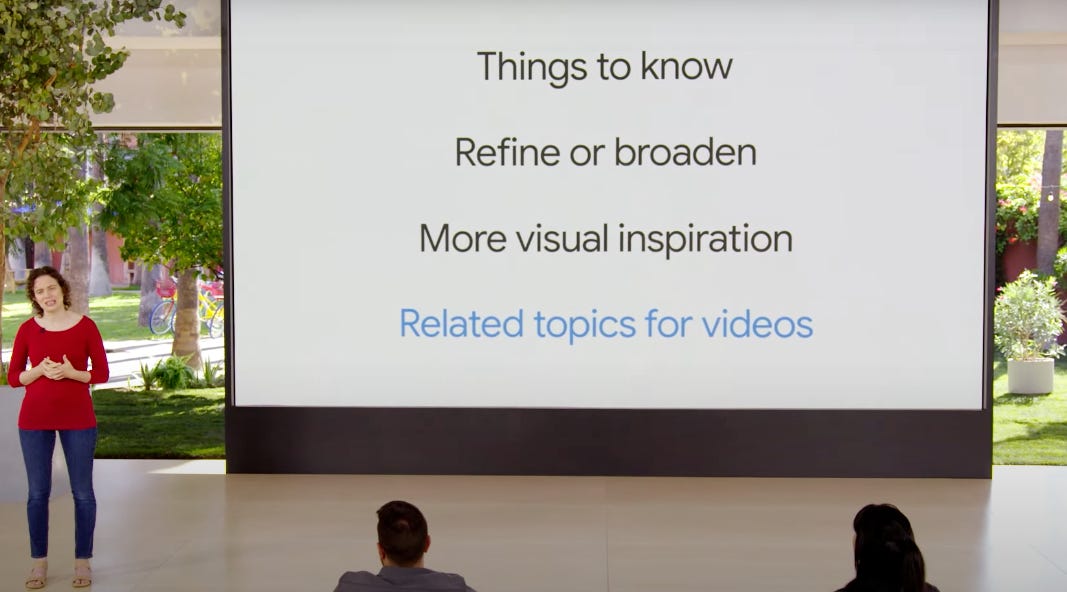

Things To Know

Search refinements

More visual inspiration

“Things To Know” (TTK) is a hybrid of People Also Ask (PAA) and Featured Snippets that seems to live within knowledge panels. TTK still seems to link to a source but shows the most important pieces of information without the user having to click through.

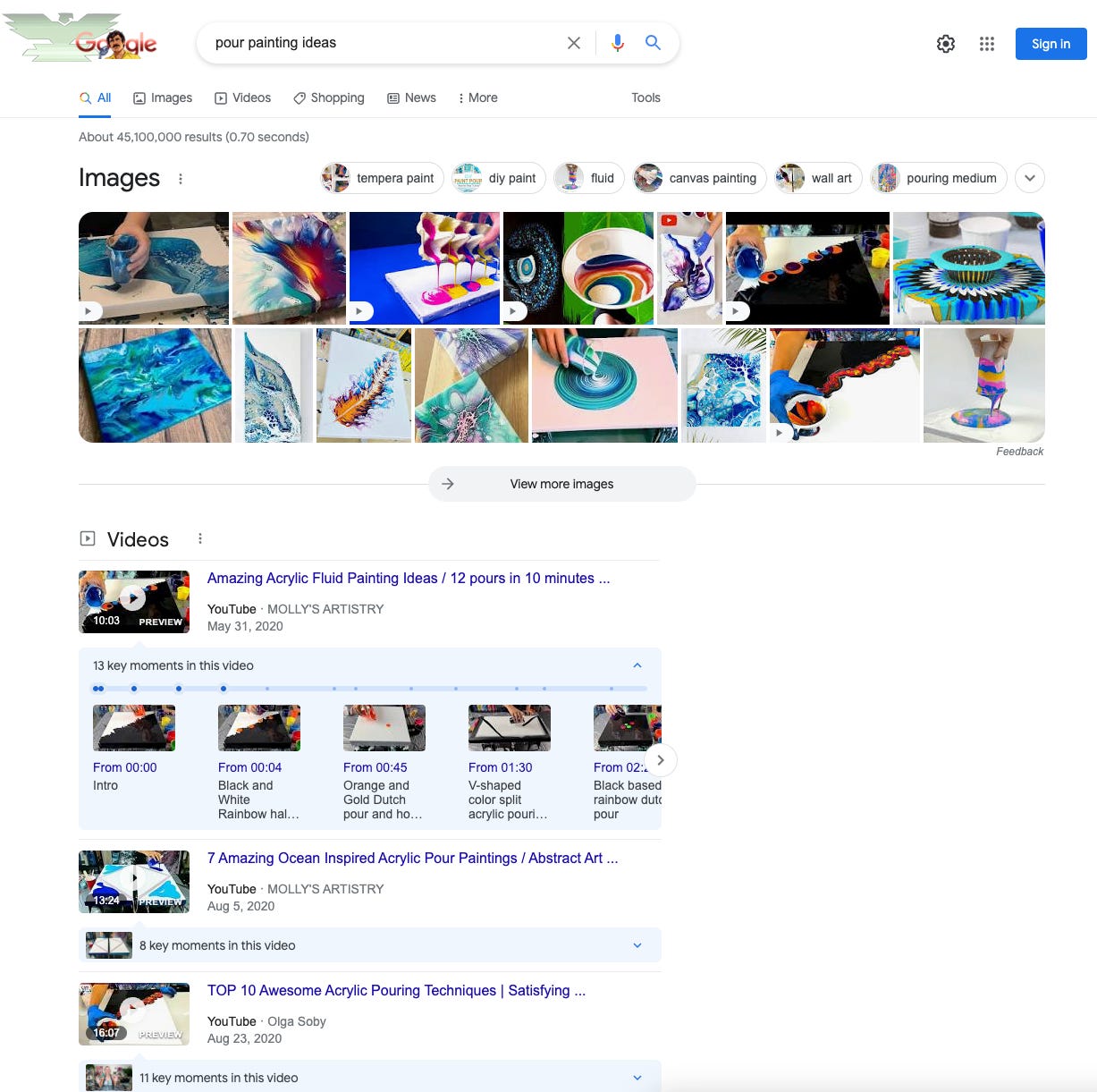

On mobile devices, knowledge panels already take up the space right after ads. I expect TTK to take up even more space. The mobile SERP for “acrylic painting”, which is used as an example by Google for the new TTK feature, is actually a good showcase of how low classic organic results appear.

With YouTube videos, People Also Search For features, and PAAs added to the mix, traffic to non-Google web pages is getting thin.

The second feature Google introduced is “Broaden This Search”/“Refine This Search”, which lets users modify their search with one click.

Google has been showing “query bubbles” in the SERPs for a while but now proactively helps users navigate through search. Search precision is a long-standing issue in information retrieval, raising questions like “do you want to include all relevant results or only the most relevant ones?” or “do you allow users to discover new topics and products or narrow results to what they specifically look for?” One benefit for Google is that search refinements increase the number of pageviews that show ads. So does the third feature.
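The trade-off behind those two questions is usually expressed as precision versus recall. The snippet below is a minimal sketch of that idea, not a description of how Google evaluates results; the document IDs and the `precision_recall` helper are made up for illustration.

```python
def precision_recall(retrieved, relevant):
    """Return (precision, recall) for a single query.

    precision: share of the returned results that are relevant
    recall:    share of all relevant documents that were returned
    """
    retrieved_set = set(retrieved)
    relevant_set = set(relevant)
    hits = len(retrieved_set & relevant_set)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant_set) if relevant_set else 0.0
    return precision, recall


# Hypothetical query with four relevant documents in the index.
relevant = {"d1", "d2", "d3", "d4"}

# A "refined" result set: few results, all of them relevant.
narrow = ["d1", "d2"]

# A "broadened" result set: everything relevant, plus noise.
broad = ["d1", "d2", "d3", "d4", "d5", "d6", "d7", "d8"]

narrow_pr = precision_recall(narrow, relevant)
broad_pr = precision_recall(broad, relevant)
print("narrow:", narrow_pr)
print("broad: ", broad_pr)
```

In this toy case the narrow set is fully precise but misses half of the relevant documents, while the broad set finds all of them at the cost of showing as much noise as signal. Refine and broaden buttons let the user pick a side of that trade-off.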

Google will start showing larger image carousels and videos at the top of search results for queries with inspirational intent. Spoiler alert: clicking on an image in the larger carousels sends you to an image search with ads at the top.

In December 2020, I wrote an article about image SERP features and how they impact organic traffic. In short, the more images Google shows in the search results, the less traffic websites get. The correlations between the presence of an image box and traffic to websites were strongly negative (roughly -0.7 on both mobile and desktop), which is rarely seen in the SEO world.
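For readers who want to run a similar check on their own keyword set, here is a rough sketch of a correlation between "image box shown" and organic click-through rate. It assumes a Pearson correlation, which may not be the method used in the original analysis, and every number in it is invented for illustration rather than taken from real data.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


# Invented example data, one entry per keyword:
# 1 = the SERP shows an image box, 0 = it does not.
image_box = [1, 1, 1, 0, 0, 0, 1, 0]
# Invented organic click-through rates for the same keywords.
organic_ctr = [0.18, 0.22, 0.30, 0.35, 0.28, 0.40, 0.20, 0.25]

r = pearson(image_box, organic_ctr)
print(f"correlation: {r:.2f}")
```

A negative value means keywords with an image box tend to have lower organic click-through rates in the sample. As always, a correlation like this does not show on its own that the image box causes the drop.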

Squeezing more revenue out of ads

At the end of the day, Google needs to drive more revenue, most of which still comes from ads. The longer it can keep users on its properties and the more often they return, the better. Google doesn’t squeeze users, but it certainly squeezes the websites that provide the content Google displays in the search results. The Walled Garden grows. Customer acquisition becomes more expensive.

What can webmasters and SEOs do? Diversify the keywords they go after to target fewer short-head and more long-tail queries. Create more visual content to appear in image and video carousels. Build strong brands to drive direct traffic.